Will 2024 Elections Be Safe From AI? | AI IRL

The rise of artificial intelligence is raising fresh fears about technology's role in distorting the truth. It's setting up a test of the strength and resilience of democratic institutions across the globe. And it comes at a time when many governments are grappling with the power of social media and its ability to stoke division.

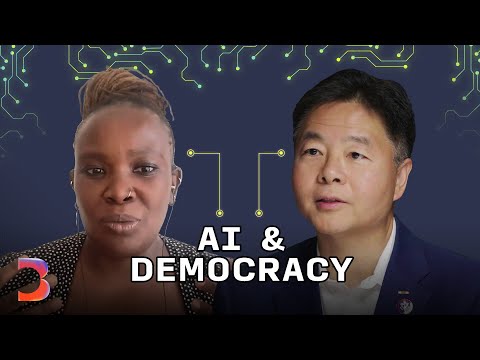

So in an effort to sidestep mistakes of the past, lawmakers are racing to put up guardrails for a technology they don't fully understand, while AI leaders vie for a bigger role in shaping them. Now, both camps don't exactly have a great track record here, so why would AI be any different? In this episode of "AI IRL," we'll explore what's at stake for democracy, if it isn't. Congressman Ted Lieu, thank you so much for joining us. So you're a representative of California.

It's home to some of the biggest companies leading the AI boom, and it's an exciting time in technology, but also a somewhat precarious chapter in society. You know, we saw how social media undeniably played a role in eroding the public's trust in institutions. What risk do you think AI poses to democracy? As a recovering computer science major, I am enthralled with AI. It is going to move society forward.

It already has. There's already some big risks that we also see, and we have to mitigate those risks while reaping the benefits. So in terms of democracy, AI is very good at doing fake videos, fake voices. If you give an AI algorithm half an hour's worth of your voice, it'll sound just like you, Jackie.

If you give it two hours' worth of your voice, your family members can't tell the difference. And then you can see how that could be quite disruptive to democracy, and just to regular scammers in everyday life where people might get tricked by AI. And how worried are you? I'm somewhat worried. Because when social media first came out, people were fascinated by it, they took it seriously, and then over time they realized, "Oh, we shouldn't trust everything we see on the internet."

And that's what I want people to see with AI and the internet. They need to have some skepticism, and not trust everything that they see, and do some double checking. I mean, it's not new that legislation plays catch up with technology a lot of the time. We saw that with, even over the last 20 years, Napster, more recently looked at something like the Cambridge Analytica scandal with Facebook, Meta now. And I wonder, "Should we believe that AI can be any different in terms of how legislation can help?" So my view is, AI is like the steam engine, which was quite disruptive when society first saw it work.

It's gonna become a supersonic jet engine in a few years, with a personality. And I don't think we're ready as a society, or as a government, to see all the amazing benefits of AI, but also the disruptions. For example, in terms of the labor force, AI is simply gonna eliminate a number of jobs, or make a number of them more efficient. Well, what do you do with that? Do you go to a four-day work week? It's my vote that we do that. Or do you fire a quarter of your workforce? Or do you make them work 25% harder? These are all decisions that we have to make.

And I'm not sure we're ready for that. One of the things people are concerned about, is that being able to discern the veracity of the audio or images that we see online is just going to get even more difficult as AI gets more sophisticated. Do you think AI has the potential to decide the 2024 election? I don't think so. I honestly think it's gonna be decided on one issue, abortion, and I don't think the Republicans are gonna be able to overcome that issue. But AI can be disruptive in putting out fake videos, and making people think that, for example, Joe Biden said something that he didn't, or that Donald Trump said something that he didn't. And again, I want people just to be somewhat skeptical when they see videos, especially political videos.

When it comes to reining in AI, you and some lawmakers have proposed that a national commission take a deeper look into what actually needs to be regulated before actual regulation is written. Talk to us about your thinking there. So I've introduced bipartisan legislation that will create a national bipartisan blue ribbon commission to make recommendations to Congress as to what kinds of AI we might wanna regulate, and how we might want to do that.

There's precedent for this in the DOD military side. There was an AI commission that did quite well, made some good recommendations. This is the same for the civilian sector.

And when we look at AI, because it's so massive and so broad, I think we need a commission that is transparent, that can narrow down the issues we have to focus on, and then give us some recommendations on how to go forward. But is there a risk that we miss the window to regulate it because we're busy creating a group to structure that regulation, while AI is just, you know, going to keep going? So the intent of this commission is not to delay regulation. It's pretty quick. If it becomes law, it requires a report within six months, and a second report within a year. And then in the meantime you can continue to pass legislation.

So there's certain elements of AI that you don't need a commission to talk to you about. For example, AI that can destroy the world. So right now there are- How do you even start there? So there are these weapons, in the Department of Defense known as autonomous weapons, weapons that launch automatically. So I've introduced bipartisan legislation that basically says, "No matter how amazing AI ever gets, it can never launch a nuclear weapon by itself.

There's gotta be a human in the loop." So that can proceed and become law, and other sorts of bills can become law, while this commission looks at the broader pictures of AI. You've talked about tapping experts to help you kind of develop some of that understanding. And Congress people have invited people like Sam Altman and other tech leaders on the Hill to really talk about their view on how this technology should be regulated.

But do you think that lawmakers have gotten too cozy with some of these tech leaders? That is always a risk. So I worked with the vice chair of the Republican caucus, and the vice chair of the Democratic Caucus. And we brought in Sam Altman for a bipartisan dinner. It was a terrific dinner.

He was very instructive. And I thought it was very helpful. I also made a point to bring in other civil society experts who had different views, views of AI risks and AI bias, to make sure that members were getting both sides of AI. This AI National Commission bill that you put forward, it's a bipartisan effort, but I'm curious what your thoughts are on how a Republican administration would regulate AI.

I think the best way to think about AI is, it's not a human being, it's not sentient, it's a tool. And tools can be used for good purposes or bad purposes. So there's nothing particularly partisan to me about AI, or about technology in general. And that's why I think if you look at Congress, we've done a number of bipartisan bills, for example, on cybersecurity. And to me, AI is in a similar realm, where it's not really a partisan issue.

It's how do we wanna make sure that we reap the benefits and mitigate the harms. Do you think it's a unifying issue in some ways? It could be. So, you know, we don't want, you know, AI to destroy the world. So hopefully we can unify on that.

Do you think that AI can help with some grassroots campaigning? Right. Like write me a fundraising email in the style of a Taylor Swift song or something. Yeah. Yeah. Would pay attention to. AI could totally do that.

And so I am assuming both political parties are gonna be using AI not only in this election, but every upcoming election. But when you mention Taylor Swift, that was interesting because part of the problem with AI is, how do you monetize what is happening? You have these large language models that are scouring the entire internet to train themselves. Part of the internet is copyrighted Taylor Swift works.

Do you compensate her for the training of AI? I don't know. Then the second question is, "Well, this AI algorithm generates things that sound like Taylor Swift lyrics, do you compensate Taylor Swift?" I don't know. Well, on the topic of kind of the relationship that the technology industry has with Washington, it's undeniably going to have to be much more intertwined, given everyone is still ramping up their understanding of what AI is actually capable of doing.

Do you think leaders like Sam Altman, are in some ways more powerful than lawmakers themselves? I don't. I think he's helpful in providing information, and providing insights into how these large language models work. And I think it might be good just to sort of step back and give you my view of how I look at AI as a lawmaker. And to me, the best analogy is sort of two bodies of water.

You have this large ocean of AI, and then this small lake. So a large ocean of AI, is all the AI we don't care about as lawmakers. So if your smart toaster has a preference for bagels over wheat toast, we don't care. But in this small lake, are the AI aspects that we care about. So one of them would be destroying the whole world. Second bucket would be AI that isn't gonna destroy the world, but can kill you individually.

So there's a lot of AI, for example, in automated cars. And if your cell phone malfunctions, it's not going 40 miles per hour. But if the AI in an automated vehicle malfunctions, it can kill you. It's killed people. And there's a lot of AI in moving things: planes, trains, automobiles. And my view is we need to have more federal regulators more attuned to unique aspects of AI to deal with those specific issues.

And the last bucket is the hardest, which is AI algorithms have some sort of bias that's harmful to society, or harmful to individuals, whether it's hiring algorithms, or algorithms that deal with bank risks and credit risks. That we have to grapple with. And that's much more complicated. Moving forward, do you think artificial intelligence will fortify democratic institutions or erode them? I have no idea. And I think- I love an honest answer.

I really love an honest answer. That's a great question. I don't think anyone predicted what social media was going to look like decades later. I do know that most people aren't particularly happy with how it turned out, which is why I think you have more of a movement in Congress to try to regulate AI, because no one wants AI to look like social media decades later. So you definitely think some regulations should be in place before the 2024 election.

I do. So for example, one of those is disclosure. I support legislation requiring disclosure of who pays for social media ads. If you watch a federal TV commercial, at some point, if I do a commercial, it's gonna say, you know, "I'm Ted Lieu, and I approve this message." Well, in social media ads, you have no idea who paid for it. So for example, now, if Russia is gonna pay for an ad supporting Donald Trump, at some point it's gonna have to say, "Paid for by the Kremlin."

And so people will know, "Oh, that ad was paid for by this organization, or this country." Nanjira Sambuli, thank you so much for joining us. So you're a policy analyst and a researcher studying the impact technology has on institutions, diplomacy, media, and entrepreneurship, culture, all of it.

And you have a particular expertise in Africa. Could you tell us a little bit about how you're seeing AI play out in the region? Thanks for having me. AI is playing out in both the good and bad, I guess the double-edged sword way that we'd expect.

On the good side, we are seeing exciting innovations in use of voice in Rwanda, in Kenya, in Uganda, where we are seeing local languages being captured in the voice databases that are then being used for service provision. In the case of Rwanda, I've heard from the government that they're using that voice that has been recorded through AI projects with civil society to expand service provision to local communities, which is exciting. On the other side, you know, we have the usual markers. Disinformation is propagated faster by technologies that are able to be, you know, co-opted for such purposes.

In Kenya, for example, during our last election, we had a very, I guess, familiar situation where obviously bots or disinformation-for-hire accounts were proliferating, and actually sullying the information environment. Are there any specific countries? You mentioned Kenya, for example. Are there any other kind of country-level examples that you can give us around how AI makes its way into democratic institutions? I think, writ large, any country on the continent that is leveraging social media is the first entry point. So Kenya, Nigeria, South Africa, practically most countries on the continent that do not face wanton internet shutdowns during moments like elections.

We have seen a variation of these tools sort of being leveraged either by, you know, politicians or other groups that have been interested in trying to sully the information environment. And it hasn't just been tied to elections. We have seen anti-LGBTQ messaging really proliferate. We have seen messaging against, or trying to sort of intimidate, you know, pro-rights groups.

We've seen, and some of that has also not just been from the continent. We've seen, I think there's a case in Kenya and a few other countries, it's foundation-affiliated right-wing groups that were leading this anti-abortion messaging that was proliferating everywhere. Then we have the case where it's particularly, you know, very prevalent on the continent, and I think across all countries, with what we call dark social. So groups like WhatsApp, where these messages are then taken, and we can't see the effect of that. And I think the most interesting one was even just the speculation of use of AI in Gabon, during the 2019 elections there, where there was a rumor that there was a deepfake video of a president who hadn't been seen in public.

He had issued an address to the country. And that in itself, the speculation around whether it was a deepfake or not, led to a coup attempt then. So I mean, of the countries in Africa that are using AI, which are equitable? Ha, equitable AI, the elusive search for equitable AI. That's hard to tell, because I guess it depends on how we are gauging that. Is AI being deployed by governments, say for service provision? We're starting to see, you know, signals towards that.

So some countries like Rwanda, Mauritius and others are signaling AI strategies. In a country like mine, Kenya, there has been a program run by the government, that we didn't even know about, that is trying to use automated decision making to allocate affordable housing. So on that level, we're starting to see that. How transparent, how accountable, how equitable these systems are in administering what they're supposed to do remains an urgent task for us to sort of audit. When do you audit? Do you audit after the effect? Do you audit before it's deployed? During? All these are mechanisms that we have to develop in real time and have very contextual conversations around. In the space of social media, obviously it's not equitable, because many of these companies, I think actually all of them, are not accountable to our regions. In Kenya, for example, one of the most interesting cases that you wouldn't think to tie to the AI discourse as we have it, but is very relevant to it actually, is the case of the labor force that has trained ChatGPT and other AI systems, to actually label this data.

So the workers who had been employed by a third party that had been contracted by OpenAI, Meta, have been taking those companies to court for the adverse labor practices, and, you know, everything from human trafficking has been mentioned, PTSD, lack of care considerations, just precarious labor working environments have been mentioned. So in that regard, obviously that is not equitable. I'd like to take a step back. Because you make a good point in kind of illuminating these different examples that are proliferating across countries in Africa. And we often take such a US-centric standpoint, because that's really where a lot of these companies who are creating AI technology are based.

And you've said that Africa's digital transformation is really a frontier for the geopolitics of digital technologies broadly. How are you seeing this play out with AI? And what can the US learn from it that perhaps we're just underestimating? Africa, and perhaps Asia and Latin America, are currently in an interesting position where everybody, that is the US and China writ large, is trying to get them to fashion their transformation pathways to their image. So you have either the kinds of technologies you're told you can use.

I think Huawei is familiar in the US context, being banned in the US. That argument to African countries is moot because that company and Chinese technology have been the backbone of the infrastructure that has connected us today. But at the same time, while they have the hardware element locked, the social media, the application layer, is predominantly US companies that we are leveraging, and then the entry of TikTok.

So those geopolitics of decoupling then tend to be moot in our context. With AI, though, what that translates to in practice remains to be seen. Because a lot of this tends to follow a cut and paste model, you know, erase Europe, add Africa in there and then launch it, shake hands and we call it a strategy. But is it representative of, not just the governments, but other stakeholders who are supposed to buy into it, and be guided by that in AI adoption? So there's a lot of that jostling, I should say, that informs a lot of what is called African digital policymaking.

But I think what has been emerging more and more from African policy makers is that for them, the most urgent task is how to leverage digital to deliver dividends, addressing jobs, addressing climate change. We have a climate summit going on in Kenya right now. The big question would be, if you say AI, what does that have to do with the climate urgency that we have to address? So those practical questions still color and bring an interesting pushback to the typical geopolitical conversation on technologies. Well, one thing I find particularly interesting about Africa as a continent is the number of languages and regional dialects that are spoken by people, often by groups that aren't very large. And although we sort of briefly touched on this at one point, I wonder if you think AI could help either bridge the gaps between communities, or languages spoken, whether that's to help spread information, or just, you know, in general, help people feel better informed about what's going on around them? More representing.

Yeah, exactly. Oh, absolutely. That's where I can definitely, you know, smile and give a positive outlook, because the diversity of languages also means diversity of knowledge, wisdom that hasn't been, you know, captured, that can help us, you know, reorder the world. AI technologies like the Common Voice Project that Mozilla and others have been running can help us capture that knowledge, again, with the condition that this is not just extractive, for some company somewhere to go and sell as some intellectual property, a limited, sort of, you know, bounded instrument, but that it'll actually help us build a new commons that is more representative of the world, and representative of a majority of people who have not informed how the world is ordered today. To shift it back to the United States for a bit, one of the fears swirling around AI right now is that it could further erode the public's trust in institutions here, especially as we have an election coming up in 2024. Do you think, in some of the countries you spoke about, that AI can actually help empower people to participate in the political process? I think it can, but again, it's not just about the technology itself.

I think AI is just the latest iteration of technology showing us that the intrinsic motivation driving the use, the adoption, and the iteration is what determines that outcome. So for example, it turns out that, you know, the popular chatbots, ChatGPT, Bard and others, are so easily hackable, to actually drive a different agenda from what is bounded as a response mechanism. If we tell people, rely on, I don't know, a chatbot to let you know about the electoral process, and that is hacked and feeds them misinformation, we've sort of fed them to the wolves.

And you know, working that back could be, you know, it could be difficult. And oftentimes you don't think about that. A tool, not a replacement. Yeah. Assistance. And I mean on that note, do you think, as far as Africa, and African countries are concerned, do you think it's the democracy that needs to change, or technology? Both. Both. They both need to change.

You know, the challenge we're having in this decade, that is really laid bare for the continent, is that the tick box has always been elections. And we are seeing that the more and more, you know, countries are having elections, the less and less demographic, sorry, democracy dividends are being delivered. Nigeria just had its own moment. In fact, just today as we are speaking, they're waiting to find out the rulings from a tribunal that was set up to, you know, assess whether there was a free, fair election.

And we are seeing a lot more of that. You know, in West and Central Africa we're seeing coups happening post electoral processes, or democratic processes. There's a lot of, you know, grievance there and we need to listen to that. A lot of that, it has been argued, is young people who are discontent with the way the world is ordered. There's a valid point there that we need to assess. Not to talk down to, but to really understand where these grievances are.

I like to say, when we think about the world today, for a younger generation on this continent, they're living in an age where many cans have been kicked down the road, and they're starting to face them even before they've, you know, found their way in the world. Will technology help them make sense of it, or will it actually aggravate and, you know, give power holders more tools to control them? These depend a lot on how we order the society. So both things have to be thought of in parallel. And I like to say, you know, "We can walk and chew gum at the same time." I mean, one last point from me is, we have seen, as you say, a number of military coups in Africa in recent times. Is AI something that's playing a central, or even a peripheral role in those, whether for good or to counter them? Well, certainly where bots and, you know, disinformation-for-hire accounts exist, they have been used to pass certain messages, or seed certain messages in certain communities.

I mentioned Gabon where there was even the illusion of a deepfake. It was analyzed later and found not to be a deepfake, but that was after a coup attempt had happened, because it was believed to be so. That message had already spread. So it was too late. We are... It was too late. I mean, now that, you know, that we can fact check after the fact, but if people were willing, there's a readiness to believe that, speaks to other, you know, discontentments that, you know, the perfect opportunity arose to feed that.

I wouldn't want to make it a causality, but there's absolutely something there that speaks to the fact that the way AI works is delivering in the way that society is already primed for it to operate.

2023-10-24 12:36