Webinar on Leveraging Digital Health Technologies to Address the Needs of Underserved Populations

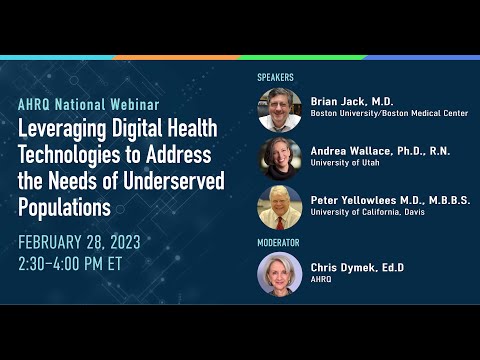

>> Welcome to the Agency for Healthcare Research and Quality's webinar on Leveraging Digital Health Technologies to Address the Needs of Underserved Populations. Although a few people are still logging in, we're going to get started on time. My name is Chris Dymek, and I'll be moderating today's webinar. I currently serve as the Director for AHRQ's Digital Healthcare Research Program, which is part of the Center for Evidence and Practice Improvement at AHRQ. Our program's mission is to produce and disseminate evidence about how the evolving digital healthcare ecosystem can best advance the quality, safety, and effectiveness of healthcare.

To fulfill this mission, the program funds research that yields actionable findings around which digital healthcare technologies can improve care for our key stakeholders: patients, clinicians, and health systems. We'll discuss three of these exciting research projects during today's webinar. Next slide, please. The agenda for today's webinar is positioned here for you. Please note that we are recording this webinar and that the recording and slides will be available on the AHRQ Digital Healthcare Research website in a few weeks. You'll be notified by e-mail once they're available.

Next slide. We're pleased to have with us today an esteemed group of presenters. They include Dr. Andrea Wallace, Dr. Brian Jack, and Dr. Peter Yellowlees.

This webinar is accredited by AffinityCE. If you're interested in receiving continuing education credit for participating in this activity, information on how to claim your credit will be presented at the end of the presentations. It will also be e-mailed to you after this webinar.

For the purposes of accreditation, let me note that AHRQ, SD Solutions, and AffinityCE have no financial interest to disclose. Drs. Wallace, Jack, and Yellowlees also have no relevant financial interest to disclose.

Also, please note that no commercial support was received for the development of this learning activity. Next slide. Just a brief note about questions.

We've reserved time at the end of the presentations to address participant questions. However, during the presentations, feel free to submit questions that you have for presenters using the Q&A panel located on the right side of the PowerPoint slides. Please include the presenter's name or their presentation order number with your question. As a reminder, participants are in listen-only mode, so to ask questions, you'll need to use the Q&A panel.

Next slide, please. This slide shows the learning objectives for today's webinar. By the end of this presentation, you should be able to, first, explain how using an electronic social needs screening tool in the emergency department can improve referrals for patients in need and monitor population health post-discharge. Second, discuss how an innovative artificial intelligence communication system can identify and mitigate health risks for young African-American women prior to pregnancy, thereby reducing health disparities in birth outcomes. And third, describe the potential impact of telepsychiatry consultations with automated interpreting on mental health services for patients with limited English proficiency. Next slide.

And now it's my pleasure to introduce our first presenter, Dr. Andrea Wallace. Dr. Wallace is an associate professor and associate dean for research at the University of Utah College of Nursing.

Her research focuses on designing healthcare service interventions aimed at narrowing gaps in clinical outcomes while simultaneously understanding how these interventions can be implemented during routine care or without research resources. Dr. Wallace regularly serves on federal review panels with a specific focus on social health integration and implementation science.

Most recently, Dr. Wallace's AHRQ- and National Institutes of Health-funded research has focused on how to assess and act on the social risks and needs of patients and family caregivers during inpatient and emergency department discharge planning. And now I'd like to turn the control over to Dr. Wallace.

>> Thank you. It's an honor to be with you all today to present this work. I sincerely hope this is helpful to anyone interested in implementing social needs screening and referrals in clinical settings. Nothing I present today is done without help from my research collaborators, community partners at Utah 211, and the University of Utah staff. I've been very fortunate to receive funding from AHRQ and currently from NINR. I have no conflicts, financial or otherwise, to disclose. So first, I'd like to begin with the why of this work.

So this is a map of urban Salt Lake City where the University of Utah is located. What this map illustrates is the 10-year difference in life expectancy between where I live in the university neighborhood and where I buy my coffee just down the hill in urban downtown. And we have important clues about the reasons for these health disparities.

In fact, if you do a Google search for social determinants of health, you'll pull up many colorful wheels just like this, each with slightly different percentages. But the point here is there's overwhelming evidence that social circumstances (and here I'd argue that environment, behavior, and medical care are also byproducts of social circumstances) are responsible for the majority of health outcomes. Now, of course, biology and genetics are important, but the vast majority of health and thus health disparities are socially constructed phenomena. And also foundational to what I present today are three important definitions that are often used interchangeably but need more precision before I launch into the details of our work today.

So we've likely all seen this definition of social determinants of health or SDOH or social drivers of health. But one very important nuance to realize is that social determinants are both positive and negative. We are all subject to social determinants. It's the social context in which we all live. The health of everyone listening today is subject to social determinants.

A good example of a social determinant is social support. There are vast data showing the importance of social support in health outcomes, but depending on the circumstances, it can either positively or negatively impact health outcomes. In contrast to social determinants, social risk factors negatively affect health. An example I'd like to use is adverse childhood experiences, or ACEs.

We know ACEs are associated with poorer health outcomes, but at the level of it being a risk factor, we don't yet have actionable information when someone walks into our clinical settings with a high ACEs score. And social needs are more tangible, more immediate, and ideally more actionable. They are the needs of an individual as a result of social determinants of health.

There are many examples, but some include currently having money or transportation to get prescribed medications or utilities to keep the fridge running and the insulin good. So with this, the research I talk about today is about social needs. And this is the model we've worked with that recognizes that effectively addressing social needs in clinical settings requires, first, high-quality, accessible screening approaches that can take place in clinical workflow.

Second, it requires means of effectively communicating the information to clinical teams in a way that informs clinical decision-making. And third, it requires effectively linking patients with resources that can meet their needs at home. We need to recognize that while we can start the process of understanding needs in clinical settings, addressing those needs requires commitments and connections far beyond screening during a clinical encounter. And because social determinants work has been moving fast in healthcare, colleagues at UCSF and their SIREN network assembled this report last summer to give us an update of social needs screening in the U.S.

This is a very accessible report for those interested, but primary findings included that there is a ton of variability in terms of how screening is being implemented. And while patients and providers generally find the purpose of social needs screening acceptable, they have some serious concerns about privacy and a lack of resources with which to respond. And this is playing out in a larger arena where health systems are responding to new regulations. CMS is mandating social needs to be assessed and acted upon as part of inpatient care, and health systems, because they know that responding will require linkages to community resources, are seeking ways to understand the outcomes of referring to systems outside of healthcare's EHRs.

But this effort is bringing in competition from proprietary systems and raising questions about patient privacy in a landscape where state health information exchanges have had difficulty getting up and running. And in fact, there are state-level efforts to adopt legislation mandating patient consent before sharing social needs and outreach information back to health systems. So in that landscape, I place our UHealth research program. Our purpose since this work started in 2017 has been to develop means of ethically and effectively identifying social needs that can be used in routine care when the work isn't necessarily supported by dedicated research staff, to facilitate access to community-based resources and integrate clinical and community-based data. To date, we've screened over 20,000 patients across multiple studies, and our work is grounded in a partnership with Utah 211, which is part of a national network of community service referral centers specializing in offering connections to free and low-cost community-based assistance programs, for things like food and housing, to all those who dial 211. And the overview of how we've done this is actually quite straightforward.

Using low-cost, readily available HIPAA-compliant tools, in this case REDCap, available in most academic health centers, staff screened for patient social needs using what is now the validated 10-item Sincere Screener. The citation about the screener psychometrics is included here, and I'm happy to share it. We referred those who have needs and want outreach to a 211 resource specialist who aims to contact patients via phone, text, or e-mail within 48 hours. And we compiled data from inside and outside the health system to understand needs and community outreach processes. Our partnership with Utah 211, which has robust encounter and resource databases, has allowed us to do all this.

And of course, this is displayed differently on the touchpads, but this gives a visual of what is administered. On the left are questions from the 10-item Sincere Screener, and on the right is information gathered from those who would like to be contacted by 211 for service connections after discharge. And we have gathered and continue to gather quantitative and qualitative information from patients, staff, and 211 resource specialists to understand the challenges and implications of integrating social needs screening into clinical systems. So let's first talk about some numbers of what we've seen from this work.

In our Digital Health R21 funded by AHRQ, we screened ED patients for social needs over 15 months and focused on evaluating our efforts using an implementation science approach. We followed the number of patients approached, completed screenings, positive screenings, and referrals from 211 to understand the potential population health impact. And here we show what might be expected if systems implement screening into routine care without dedicated staff and mandated screening.

About 10 percent of potential ED discharges were approached for screening by staff. The good news is that the screening is relatively easy to complete. It takes about one minute, and most finish the questions. Now for the other news. We saw and continue to see that over 40 percent of our patients screened in our university ED have one or more needs, but only 40 percent of them want outreach.

Of those who want outreach, only 30 percent connect with 211 for help. In the end, that means less than 10 percent of those who state they have social needs actually engage in a way to get help. And here we see the breakdown of stated needs from the most to least common. In our psychometric analysis of the Sincere Screener, it's important to note that the four needs at the top for utilities, mortgage or rent, household items, or food drove patients' desire for outreach.

Likewise, having two or more of any of the 10 needs listed also drove a desire for outreach. And here's just a sample of the data we track in terms of screening activity with staff. Even after the research study stopped, staff maintained screening and the health system saw the service as valuable. However, there was and continues to be a great amount of variability between individual staff in terms of engagement and screening.

But this is why we continue doing this work. Here's one example of notes summarized from our 211 Resource Specialist. In her initial call, the Resource Specialist wrote, I spoke with a patient's daughter who mentioned her mother needs help with meals and transportation to appointments when she's not available.

I provided a list of resources and will follow up next week. And two weeks later, she wrote, I spoke with a patient. She mentioned neither she nor her husband speaks English or drives. She's 83 years old and had heart surgery 17 days ago, has MS and arthritis. Her husband is 92, diabetic and on oxygen.

She said her daughter helps but is busy juggling school and work. Because of the busy schedule, her daughter wasn't able to connect with any resources. I was able to connect the patient with aging and adult services and help translate, as well as help complete the phone application for Meals on Wheels and the Rides for Wellness program. Starting next week, patient and her husband will receive meals Monday through Friday and will receive services for transportation to medical appointments.

This is why we do this work. So from the numbers, we can screen and make connections in a quick and cost-effective way with existing low-cost resources. But in routine care, we had lower-than-anticipated screening and we have clear drops, what we now call voltage drops, between those with needs and those who ultimately get help. So now, what did all the qualitative data from staff and patients tell us about where to go if we want social needs screening to be effective? Despite scripting and ongoing training, in interviews and observation we found a lot of discomfort from staff about asking what they viewed as stigmatizing questions.

And we found that staff profiled patients. They would determine who to screen based on clothing, insurance or listed employment. And without going into as much detail as the paper cited here, of course, we really found that self-determination theory applied well here. The staff who saw this as a tool for engaging with patients and felt that they had an important role in their larger clinical team were those who were more inclined to screen patients for social needs.

Meanwhile, from patient focus groups, they understood that healthcare providers may not be able to address all their needs. And while they would prefer nurses to do the screening, they are open to screening being done by other team members, as long as it's done with sincerity and is done universally. They don't want to be singled out and asked these questions. They also recommend that questions be accompanied with a clear explanation for why they're being asked, even before they're asked.

And something that we didn't fully expect. Patients strongly communicated they do not want this information to be in their EHR for fear of being permanently labeled and treated differently by healthcare providers. They voiced serious concerns about their privacy and potential stigma. So as we continue exploring all these tools to make it easy to document in clinical settings, we need to better understand how to make these efforts patient-centered and reduce the risk of reinforcing health inequities.

And we continue to do more work here. An example is that our data show that it seems important to focus on multiple needs versus one need, in this case food insecurity. The analysis also demonstrated how screening data can be linked to community resources, in this case food pantries, throughout the state of Utah.

And in this analysis, we show there are likely language-based differences in screening and referrals. In fact, this justifies our inclusion of a "do not wish to respond" option for each question, as Spanish speakers, while on average reporting more needs, are also less willing to disclose those needs. In our latest work, we've demonstrated that how we do screening results in differences in desire for outreach.

Unsurprisingly, those who interact electronically via MyChart are very different than those who are screened while in the ED. So we need to carefully consider how and where we screen and how that may reinforce inequities. And finally, what these graphs show, which are under review now, is that having a personal phone number is important to receiving help, and that not having your own phone number is tied to needs for housing and transportation. These findings feel like common sense, but in this sample, 7% of our patients screened may have no way of communicating with the health system, even by phone, much less by text messages or through an EHR portal. So this is a cautionary tale about how patients continue to be left behind if we rely solely on the EHR to do outreach.

So then, what are we doing now with this? With the launch of our latest study in November 2021, our screening takes place in the adult and pediatric emergency departments, a mobile health outreach clinic, and, because our NIH funding comes in part from a COVID-focused initiative, after COVID testing. COVID-tested patients are sent the Sincere Social Needs Screener by MyChart message. This is in contrast to screening at the other sites, which is done in person by staff, research associates, and volunteers. After screening, patients are asked whether they would like outreach by a 211 resource specialist for free and low-cost referrals. The name, contact information, and social needs are shared with 211, whose specialists attempt to contact patients within 48 hours of referral. To test whether we can improve outreach success, patients are randomized to one of three groups: usual care, involving multiple referrals and follow-up conducted only as needed; attention control, involving multiple referrals and follow-up every two weeks for three months; or collaborative goal-setting, involving prioritization, troubleshooting, action planning, and follow-up within 72 hours and then every two weeks for three months.

Patients with a 211 interaction are then asked if they wish to complete surveys to better understand community service use and to enroll in our ongoing study. And as you can see here, in addition to tracking outreach and connections made, a range of six-month general health and COVID-specific outcomes are then tracked for those who enroll in the study. So, in sum, in our current work, we are focusing on expanding referrals to understand how to best reach those impacted by COVID-19 and now in general health populations.
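As a rough illustration of the three-group design just described, the sketch below assigns referred patients to usual care, attention control, or collaborative goal-setting. Equal-probability assignment, the arm labels, and the `assign_arm` and `randomize` helpers are all assumptions made for illustration; the talk does not describe the study's actual allocation procedure.

```python
import random

# Arm labels paraphrase the three groups named in the talk (labels are illustrative).
ARMS = ("usual_care", "attention_control", "collaborative_goal_setting")


def assign_arm(rng: random.Random) -> str:
    """Pick one arm with equal probability (an assumed scheme, not the study's)."""
    return rng.choice(ARMS)


def randomize(patient_ids, seed=0):
    """Return a {patient_id: arm} mapping; a fixed seed makes the run repeatable."""
    rng = random.Random(seed)
    return {pid: assign_arm(rng) for pid in patient_ids}


# Hypothetical patient identifiers, used only to exercise the sketch.
assignments = randomize(["p1", "p2", "p3", "p4", "p5", "p6"], seed=42)
for pid, arm in assignments.items():
    print(pid, arm)
```

A real trial would typically use a concealed, pre-generated allocation sequence, often blocked or stratified, rather than an on-the-fly draw like this one.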

We are testing methods for improving engagement and connections centered on patient problem-solving and self-efficacy. We are measuring patient-centered outcomes over time versus focusing exclusively on health service utilization. And along the way, we are targeting motivation and competence of staff engaged in screening.

So, I know this has been a lot to take in today and that this work has many components that are ongoing, but the key takeaway is that we have readily available, cost-effective tools to universally screen for social needs in our healthcare systems. Our approach is one of many approaches that can be used, but our research findings uncovered some critical caveats that suggest that addressing social needs in clinical settings needs to consider staff and patient beliefs, relationships, and systematic barriers if it is to be a solution for addressing health inequities. Thank you for listening today, and again, we hope this gives attendees some useful information about screening to consider in their own work.

And, of course, here's my e-mail if you have any questions that don't get addressed today, and thanks again.

>> So, thank you very, very much, Dr. Wallace. As a reminder for the audience, we'll be taking questions after all presentations, so please submit any questions you have right now into the Q&A panel. Let's move on to our second webinar presentation, which is being led by Dr. Brian Jack. Dr. Jack is a professor and former chair of the Department of Family Medicine, Boston University School of Medicine. His research team developed the Re-Engineered Discharge Program, now used in hospitals around the world. He has received the Peter Drucker Award for Nonprofit Innovation, the Centers for Disease Control and Prevention External Partner of the Year Award, and the AHRQ Award for grantees whose work has led to significant changes in healthcare policy. He's also a member of the National Academy of Medicine. And now, allow me to turn the control over to Dr. Jack. Thank you.

>> Thank you, Chris, very, very much. It's really a pleasure to be here with you today. Okay, so today I will discuss a health information technology system we designed over the past ten years to deliver preconception care for young African American women using conversational agent technology. It's been a long-running project, funded with six or seven different grants from different sources, especially from AHRQ.

We're greatly appreciative to them. Our motivation for this work is shown here: there is an unacceptable disparity in maternal and child health outcomes between African American and white women. As you can see, there's data for low birth weight, preterm birth, infant mortality, and maternal mortality rates for white, Hispanic, and African American women for each of those birth outcomes.

And you can see graphically that this is one of the most despicable disparities of them all, that we have to do something about it, and that we haven't done nearly enough to do that. Okay. And we have taken a primary prevention approach to improving birth outcomes for African American women, that is, to identify health risks prior to pregnancy and introduce interventions to mitigate those risks. The CDC has defined preconception care as the medical care of a woman or a man that focuses on parts of health that have been shown to increase the chance of having a healthy baby. And risks are very, very common, and you can see here that the percent prior to pregnancy who smoke, who consume alcohol, medical conditions, seronegative, HIV/AIDS, prenatal care, various medical comorbidities, taking teratogenic drugs, overweight or obese, not taking folic acid, is really profound. And to identify these risks prior to pregnancy makes a lot more sense than identifying these risks at the beginning of pregnancy, so that something can be done prior to pregnancy to improve outcomes.

So there is a need for efficient ways to assess a woman's preconception risks in order to prioritize valuable appointment time with providers and to support the woman in taking action to minimize her risks. As part of the work of the CDC Select Panel on the Content of Preconception Care, the clinical work group that I chaired identified over 100 clinical conditions which, if identified and addressed before pregnancy, could improve pregnancy outcomes. This work was published as 13 articles in the American Journal of Obstetrics and Gynecology that correspond to the domains and individual risks that are shown here.

The Gabby system that we're going to talk about in a minute identifies each of these domains and each of these individual risks in a risk assessment tool that was developed as part of this work. So the question is, can health information technology help to screen for and to identify preconception risks and to provide interventions appropriate for each of those risks? So we have worked to develop Gabby with my colleague Tim Bickmore, who really was one of the moving forces in conversational agent technology over the past 15 years, and who is now at Northeastern University. So Gabby is an animated conversational agent with tailored dialogue, tailored meaning specific to the individual risks for that woman, and the ongoing unfolding dialogue that Gabby presents about that risk. Gabby screens for over 100 preconception risks, provides 12 months of evidence-based health education tailored to a woman's individual risks, provides behavioral change counseling based on what the patient is ready for, and measures progress and provides feedback about those risks over 12 months. We did a great deal of qualitative research to identify what the character really ought to look like using focus groups, key informant interviews, patient advisory groups, and usability testing conducted with over 100 African-American women ages 15 to 34, which is our target group, over the past 10 years.

We used this information, with suggestions for the design, to decide what the character's name is, what the character looks like, what the script content is, what the backstories of the character are and the stories that the character tells in the unfolding dialogue, the various social networking tools that we have implemented, and the visual layout of what the interface looks like. A typical Gabby system interaction looks like this. So the patient will meet Gabby and take the health survey to identify which of those 100 things are worth talking about, that is, which the patient is at risk for or screens positive for. Gabby then reviews what we call the My Health To-Do List, which is the list of things that the woman, upon her choice, can decide what to address and what not to address, and she can choose the topic to learn about with Gabby. And Gabby doesn't force anything on them, but they can choose when they're ready to talk about each of the individual risks. Gabby then identifies the trans-theoretical model stage of change.

We'll talk about that in a moment. This step identifies whether they're pre-contemplative, in which case they will receive motivational interviewing dialogue, or they're contemplative, in which case they'll receive shared decision-making dialogue, or they're in the action or maintenance phase, in which case they'll receive problem-solving tips, homework, and goal setting. The user then decides to learn more about that topic. Gabby provides up to 12 months of health education dialogue until the goal is achieved. And the goal that we defined is reaching action or maintenance in the trans-theoretical model for the randomized trial that we'll talk about in a moment. If they achieve the goal or if they decide to move on to learn about a different risk, then they go back and choose from the other list of risks that were identified on the risk assessment.
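The stage-to-dialogue routing described above can be sketched as a simple lookup. This is only an illustration of the mapping as stated in the talk, not the Gabby system's actual code: the names `DIALOGUE_BY_STAGE`, `select_dialogue`, and `goal_reached` are hypothetical, and the preparation stage is left out because the talk does not say which dialogue type it receives.

```python
# Dialogue type for each stage of change, as described in the talk.
# "preparation" is deliberately absent: the talk does not specify its dialogue.
DIALOGUE_BY_STAGE = {
    "pre-contemplation": "motivational interviewing",
    "contemplation": "shared decision-making",
    "action": "problem-solving tips, homework, and goal setting",
    "maintenance": "problem-solving tips, homework, and goal setting",
}

# The trial's goal, per the talk, is reaching action or maintenance.
GOAL_STAGES = {"action", "maintenance"}


def select_dialogue(stage: str) -> str:
    """Return the dialogue type for a stage, or raise if the stage is unmapped."""
    key = stage.strip().lower()
    if key not in DIALOGUE_BY_STAGE:
        raise ValueError(f"no dialogue type described for stage: {stage!r}")
    return DIALOGUE_BY_STAGE[key]


def goal_reached(stage: str) -> bool:
    """True once a risk has reached action or maintenance."""
    return stage.strip().lower() in GOAL_STAGES


print(select_dialogue("Pre-contemplation"))
print(goal_reached("contemplation"))
```

Raising an error for an unmapped stage, rather than guessing a default, keeps the sketch from implying behavior the talk never describes.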

Gabby also organizes the risks identified so that the user can keep track of their progress. And there are features such as the Gabby blog, glossary, health websites that they can identify. They can browse various health topics. There's a personalized color scheme in quotes. They can produce their own personalized reproductive life plan about what their goals are relative to reproduction and set their own goals.

In addition, the risks that have been identified, in terms of living well and staying well, are tracked over time so the woman can keep track of how well she is progressing through the list of risks in terms of resolving them or mitigating them. The trans-theoretical model was the basis for the outcome of our randomized controlled trial. Its stages are pre-contemplation, which is not intending to take action in the next six months; contemplation, intending to take action in the next six months; preparation or planning, ready to take action in the next six months; action, having made lifestyle changes in the last six months; and maintenance, doing a new behavior for six months.

So behavior change is a process, not an event. And breaking it down into the various levels of the trans-theoretical model has been very useful for us. I just want to show quickly what a typical interaction could look like, showing Gabby providing motivational interviewing for a woman. Specifically, this conversation is tailored in the dialogue for a woman who is sexually active, not desiring pregnancy, and not using birth control.

So she's pre-contemplative for not using birth control because she's sexually active and not desiring pregnancy, but not using birth control. This is what Gabby would do.

>> The first step to good preconception health is being able to control when you get pregnant. Is it okay if we talk for a few minutes about planning ahead for pregnancy? I understand that this can be hard to talk about, and it is up to you. But this is a topic that women often feel torn about, and sometimes it can be helpful to talk about it.

It will only take a few minutes. How would you feel if you got pregnant right now? I understand that you probably have a lot of other things going on, so thinking about pregnancy might not be at the top of your list. But if you could choose to be pregnant right now or not, what would you prefer? It sounds like you have some concerns about getting pregnant. Could you tell me more about that? So it sounds like you're concerned that a baby would get in the way of doing things you want to do, but you mentioned earlier that you're not using birth control regularly. Can you tell me more about that? Tell me more.

Why do you think you cannot get pregnant? It is true that an irregular period, or a period that does not come at all, could make it harder for you to get pregnant. But it does not make it impossible. Your body could release an egg at any time, and that means if you have sex, you could get pregnant.

And did you know that half of all pregnancies are surprises? Many of those are to women who did not think they could get pregnant. And you have said that you do not want to be pregnant right now. How about if we talk about some effective ways to prevent pregnancy?

>> Sure. So the woman responds, sure.

So in this short conversation, the woman has gone from pre-contemplation, meaning that she did not want to talk about family planning methods to prevent pregnancy, to saying, sure, let's talk about that. So she has gone from pre-contemplation to contemplation. Once the system was developed, we conducted a randomized controlled trial that was published in the Lancet Digital Health a year or so ago. We randomized 528 women from around the United States, enrolled online, who self-identified as African-American or Black, were ages 18 to 39, were not pregnant, and had telephone or computer Internet access. The control group received a letter listing the risks, so they had the same risk assessment.

The risks were identified, and they were told to speak with their doctor or clinician about addressing those risks. The intervention group received Gabby, which intervened on all the risks for a period of 12 months. The primary outcome was reaching action or maintenance, meaning a risk had been addressed and resolved during the intervention period.

Our outcomes show that we were successful in recruiting 528 African-American or Black women from across the U.S., from 35 states and 242 different cities. Characteristics of the sample showed that we recruited women with higher levels of education, higher peer literacy, and higher health literacy than was expected.

Use of Gabby resulted in a 16% increase, compared to the control, in the reported rate of primary care risks being addressed and reaching action or maintenance. And the data is here for six months and here for 12 months. So at 12 months, the 16% increase had maintained itself, in terms of maintaining the success that we had in resolving risks.

After six months, two-thirds of the participants reported they used information from Gabby to improve their health. Another 20% planned to in the future. Looking at the data specifically by health domain, if we look at nutrition, for example, this is where people start; the blue is the intervention group, the red is the control group. We can see the intervention group moves further along on average for women who have risks in those areas. All the domains made progress except for substance abuse, which did not make progress.

Overall, the domains literally moved from contemplation to preparation on average across all risks. Participants told us, "The nurse or doctor, they tell you, but like how they say it. They said it in different ways, but how Gabby said it, she actually said something that I actually understood." "It's like it seems like she's not going to judge you if like there are things you did or something."

"Sometimes the doctor is really busy. They might not have the time to answer or the patience to talk with you about those issues. So in that way, Gabby is better."

With those outcomes, we have adapted the Gabby system for use in Lesotho, a low-to-middle-income country in southern Africa, which has high HIV and TB rates. We culturally adapted the system, clinically adapted it, and technologically adapted it to use cell phones, mobile phones in Lesotho. The idea there is that we can assist an overworked health workforce to deliver health education.

On the left, you can see a picture, a real picture, from a typical day in the outpatient department with these long, long, long lines of patients who need to be seen, where there's an enormous amount of health workforce need in Lesotho. And I think we've begun the journey to leapfrog. Delivering face-to-face health education in a situation like this in the OPD is really not going to happen. But to leapfrog right to health education on mobile phones in Lesotho is a real possibility in the future.

Our early data show that, for women who have used the system for, I guess it's four to six weeks, responses to statements such as "it helped me make decisions," "it could quickly improve health education in Lesotho," "I intend to continue using it," "I want to encourage others to use it," and "it's culturally appropriate" are kind of off the scale in terms of agreeing or strongly agreeing with those statements.

We have also now begun to develop Gabe, another conversational agent character for young men, young African-American men specifically. The content, though, is really very different. It does include medical conditions and risks, but much more on emotional health, nutrition, sleep, exercise, housing, employment, education, and a lot of the social determinants, criminal justice, healthy relationships, discrimination and resilience, family planning, and adverse childhood events, et cetera.

So in conclusion, we believe that Gabby is an advanced, comprehensive, and tested patient-facing health IT system that is widely scalable.

Tailoring and engagement in health behavior change dialogue are game changers, and it can be used as an adjunct to clinical care, that is, to assist a clinician in the office to deliver health education on these things and to monitor progress that Gabby makes, or as a population health tool at the health system level, and can, we believe, be used in under-resourced settings around the world. It's clear that young consumers want to use these new technologies. And the focus of our system and of our work is on implementation of the system.

So thank you very much. It's really been a pleasure, and we hope that there's time for questions at the end. >> Thank you so much, Dr. Jack.

And for our audience, again, please feel free to submit any questions you have using the Q&A panel at this time. So let's move on now to our final presentation of this webinar, which is being led by Dr. Peter Yellowlees. Dr. Yellowlees is the Distinguished Emeritus Professor of Psychiatry at the University of California, Davis, where he directs the Fellowship Program in Clinician Well-Being.

He is also CEO of Asynchealth, Incorporated, a telemedicine company he co-founded. He has written over 250 academic papers and book chapters and seven books, as well as over 180 video editorials on psychiatry for Medscape. He is regularly invited to present lectures nationally and internationally and is often featured in the media. And now I'd like to turn the control over to Dr. Yellowlees. Thank you. >> Thank you very much indeed, Chris.

It's a real pleasure to be here, and thanks to everyone who's logged in and who's listening. I'm going to start with a little bit of context. I'm talking about automated interpretation of language in both medical and psychiatric consultations today, and I'm going to really review just how accurate some of these systems that we've been using here at UC Davis are. But this all goes back about 20 years, when we first started seeing patients in the Central Valley of California using videoconferencing, so ordinary telepsychiatry. And we found that whilst we saw many patients without any problems, the Hispanic, typically field worker, population was actually very difficult to reach.

They frequently had poor English, and so we had to use interpreters. At this time, interpreters were not readily available. And generally, these interpreters were either family members, often children, or some of the nursing staff in the clinics. But either way, they weren't very satisfactory.

The alternative was that the patients just simply didn't turn up for their psychiatric assessments, and that's because they couldn't afford to take a half day off from work to come and have an extra medical consultation. So we started the process of asynchronous telepsychiatry at that stage, where we started literally recording videos of the patients when they came in to see their primary care physician. And that saved them a full day off work to have an extra consultation. We then sent those video recordings to our psychiatrists, who looked at them, reviewed the electronic records, and wrote consultation notes that the primary care physicians could follow up with the patients. Now, having been doing that for a number of years, we thought that it might be interesting to see if we could use the asynchronous nature of the Internet to add value to those consultations. In other words, we could do the consultations in the patient's own language, typically Spanish, and then have those Spanish videos automatically interpreted using a number of different interpreting tools that were becoming available about a decade ago.

And that led us to applying to AHRQ for this particular grant. Now, if I move on. So what was the aim of this grant? First of all, we wanted to build an automated asynchronous interpretation tool, and we did that in the first year. We wanted then to take that tool to compare the interview and language interpretation quality and accuracy of automated translation compared with our gold standard of interpreted translation. The next aim was to compare the patient satisfaction with automated translation compared with working with an interpreter and a psychiatrist, and finally to compare the diagnostic accuracy of those two methods. And I want to acknowledge the many team members at UC Davis that have been involved in this, some of whose names are down at the bottom of this slide.

So what are the learning objectives of this particular presentation? There are really three main ones. First of all, we've shown that automated transcription and translation of asynchronous telepsychiatry interviews is absolutely feasible and is something that can be done. Secondly, we have really come to the conclusion that currently the accuracy of automated language translation for simple words is sufficient for general medical interviews but probably not for psychiatric interviews, where more sophisticated language is often used. And finally, we have found very clearly that patients much prefer being interviewed in their own language without an interpreter, but that interpreters themselves are actually more accurate when using video conferencing compared with in-person interviews. And we actually ended up in this trial seeing about 30 patients using Zoom instead of in person as a result of the pandemic, and this was an interesting incidental finding.

So for more context and background, we know that patients with limited English proficiency receive substandard health care. There's a huge amount of information about that as reflected by the previous speakers. And we also know that medical interpreters are often in short supply, and also their quality is completely unknown and has really never actually been properly assessed. So we saw automated translation of recorded interviews as being a possible solution. Now, this is what asynchronous telepsychiatry is. Essentially, if you look at the -- we're comparing it with synchronous telepsychiatry, which is just basically video conferencing directly with the patient wherever they happen to be.

In asynchronous telepsychiatry, a provider refers a patient, a video of the patient, with an interviewer who's typically a non-medical interviewer, is recorded, and that is then sent to a psychiatrist, as you can see in the bottom right-hand corner of the slide, who reviews that interview, looks at any other EMR data or any other data they've got about the patient, and literally writes a treatment plan and recommendations for the primary care physician that the primary care physician can then follow up. So this is a form of collaborative care, but it's really becoming the gold standard for much of mental health care nowadays. This is what we built, obviously, without any faces being able to be seen, but we built a nice, sophisticated app that allowed us to upload videos and also make diagnoses, write notes, and send information back to primary care physicians.

So we then started doing the studies. The first study we had to do was actually very basic, just to look at language translation accuracy. We had to make decisions as to which translation tools to use, and we had to follow HIPAA compliance rules. So that actually ruled out most of the translation tools. And in the end, we really had to make choices between Microsoft and Google, and so we used those particular companies' transcription and translation engines.

In this first study, we actually didn't use patients because we were looking at a fairly wide range of different technologies, and we obviously had to keep to HIPAA. So we recorded several fictional interviews. These were uploaded to our app, and we then went through a process of counting the errors for both the transcription of Spanish language into Spanish writing, and then from Spanish writing into English writing, and obviously the translation component of that. And there are clear, well-described methodologies in the literature to look at how to calculate word error rates and the accuracy of the different transcripts. So we went through with initially these fairly simple language interviews. This is the math.
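The slide's formulas are not reproduced in this transcript. As a rough illustration only, the standard word error rate is the number of word substitutions, deletions, and insertions needed to turn the automated transcript into the reference transcript, divided by the number of words in the reference. The minimal Python sketch below assumes that standard definition and assumes accuracy is simply one minus the error rate; the function names and sample sentences are hypothetical and are not taken from the study.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Standard WER: word-level edit distance divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


def accuracy(reference: str, hypothesis: str) -> float:
    """Assumed here to be 1 - WER, floored at zero."""
    return max(0.0, 1.0 - word_error_rate(reference, hypothesis))


if __name__ == "__main__":
    # Hypothetical sentences: one word dropped, one word substituted.
    human = "me duele mucho la cabeza desde ayer"
    machine = "me duele mucho cabeza desde hoy"
    print(round(word_error_rate(human, machine), 2))
    print(round(accuracy(human, machine), 2))
```

Under this definition a lower word error rate is better and a higher accuracy is better, which matches how the two scores are read in the results that follow.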

I won't go into great detail about this, but it's well worked out, and we can calculate essentially the word error rate and the accuracy rate of the actual language transcription and then translation. Now, what were the results of this? If you look on the left-hand side here, these are just taken from two interviews. If you look at interview one, top left, you'll see up here essentially that for the word error rate, the lowest score is a good score. Google was beating Microsoft clearly on that one, as they did also on interview two.

So you can see the word error rate is 0.07 on interview two and 0.19 on interview one. And these are perfectly acceptable word error rates. Then you go to the second level of actually translating that transcribed language, and here a high score is good. So here the Google accuracy was 0.84 and then 0.95 for these two particular interviews. And so this basically said to us that certainly Google was more accurate than the Microsoft version and accurate enough to meet the general industry standards, which were about a 10% error rate overall.

Now, we then moved on to look at a much more difficult, I guess, situation, which is the actual genuine psychiatric interview. We designed a randomized controlled trial where method A was our gold standard, the patient being seen with an interpreter and a psychiatrist, so the patient speaking Spanish, the interpreter translating that for the psychiatrist. And method B, the experimental one, was where we recorded the patient and then used an automated translation system.

All patients were phone screened, gave verbal consent. We got patients referred from primary care clinics and paid them a small amount for their time at a level that was thought to be non-coercive. Over about a three-year period, we saw 114 patients. Seventeen were screened to have mainly psychiatric disorders and 40 chronic medical disorders.

And all of them came into our clinic for essentially a four-hour clinic visit where their interviews were randomly ordered, so method A versus method B first. They all had the research SCID, which is the psychiatric gold standard for diagnosing patients, and we collected considerable amounts of satisfaction data about each of the two methods, including having them make forced choice preferences between the two methods. And then we also gave all of the patients their notes so they could take them to their primary care physicians and follow up as necessary. So this was a comprehensive RCT. We had a lot of lessons learned from this.

First of all, it was extremely difficult to actually recruit a lot of these patients. And we went through all sorts of different approaches and finally employed a Spanish-speaking research assistant who had a prior background of working in essentially call centers and was very used to doing cold calls to patients. And she was actually very impressive and managed to recruit much better than our previous approaches. We also found that, not to our surprise, but this confirmed what we expected, that a lot of the patients had major trauma histories, which made them very sensitive to these consultations. And we actually ended up on several occasions with interpreters being in tears, hearing the stories that were coming out. And then obviously COVID was a challenge, although it ultimately turned out to be helpful.

And then finally, quite a few of the patients who said that they had medical problems actually also had psychiatric problems. So there was a lot of extra morbidity. So, again, that was something we predicted, and we certainly saw it. Now, we've actually published the results I'm about to show you here in JMIR. So you can look that up if you just put my name into PubMed. And what we were doing here was examining the hard part of language, what are called figurative language devices.

In other words, the use of metaphors and similes and idiomatic expressions that come up in psychiatric interviews much more than in general medical interviews. And you can see here some examples of that. So, for instance, a metaphor, you can see that it's written in Spanish. The correct translation in English is, this is overwhelming, but actually the literal translation in English is, this is filling my brain.

So you can understand how that's much more difficult for an automated system to translate correctly. And you can see several other examples of these. And, as I say, the slides are all going to be available, so you can look at more details of difficult language that is commonly used in psychiatric interviews by people to explain how they feel. Now, what did we find? Well, first of all, we found many more of the figurative language devices used per minute of an interview with the asynchronous telepsychiatry. In other words, when patients were being interviewed in their own language. That's not surprising, but what it means is that we actually had much better levels of language, much more detailed language, where we were interviewing patients in their own language and recording that interview.

And I think it's actually well described in the literature that patients themselves, when they're working with interpreters, often use a fairly sort of pidgin form of their own language, which doesn't give the interpreter perhaps enough of a feel for what's actually going on with them. So we certainly saw that. We saw that people spoke much more freely in their own language when being video recorded, many more words per minute. We actually found, not surprisingly, that the interpreters actually more accurately translated these figurative language devices. So, again, we weren't surprised by that.

And then if you actually did Zoom rather than in person, the interpreters became even more accurate. And so actually we found that the interpreters provided a better level of interpretation online than they did in person. Now what about preferences? Well, interestingly, the patients actually overall preferred to have the interviews done in their own language by a non-psychiatrist, with that being recorded. Having said that, about half the patients had no overall preference. Certainly 75% of the patients were comfortable with being video recorded for this interview. And about 25% to 30% would have preferred to have a traditional synchronous interview directly.

So what are the implications here for cross-language health interviews in the future? There are a lot. First of all, patients prefer being interviewed in their own language and give a much more detailed and more descriptive medical and psychiatric history. Secondly, the current gold standard is in-person interpreters or online interpreters. And this should probably change to increasing use of video interpretation rather than in-person interpretation.

We found that current transcription and translation systems are probably adequate for simple medical interviews, hence the first trial, but not yet ready for complicated psychiatric interviews where there are a lot of figurative language devices used. But obviously we know that automated language systems are constantly improving with machine learning. And I would bet that they will ultimately become the gold standard for interpreting done via asynchronous or synchronous methods. So our conclusions from the trial, going back to our learning objectives originally, first of all, asynchronous telepsychiatry and automated translation is absolutely feasible.

When using medical interpreters for long interviews, patients often speak in this pidgin language, and that's often encouraged by the interpreters, using fewer words and less complicated language than when speaking to a natural language speaker. And I think as clinicians we all have to be very aware of that fact. Thirdly, we found interpreters are more accurate on virtual consults than they are in person, which is absolutely fascinating.

But there was no question about that. Fourthly, in terms of the actual translation systems we used, we found Google Translate to be more accurate, and it was probably sufficient for simple interviews. Now, for this study, the actual data collection was between sort of two and a half and four years ago.

So these systems have greatly improved since then. We found that interpreters are slightly more accurate at figurative language device translation than the automated systems. And undoubtedly, patients overall preferred the asynchronous consults in their own language with video recording to using interpreters. So we have a lot more data to analyze, and we're still partway through this data analysis.

The study actually only officially finished about five months ago. And we look forward to more publications and more results. And I hope this has been of interest and look forward to any questions that you might have.

>> So thank you, Dr. Yellowlees, and thank you again to all of our presenters for their very informative presentations. So this concludes the content portion of the webinar. Now we have a few minutes left for questions.

You can type them into the Q&A section of the WebEx portal as depicted on this slide. Although we may not be able to get to them all today, we'll provide responses to all questions in writing. You'll receive an e-mail once these responses are available. Now, as you're thinking about your questions to submit, I have a few starter questions for our panel. So let's start with Dr. Wallace. So, Andrea, you mentioned staff reluctance to screen.

Can you talk more about strategies that health systems might use to help with this? >> So this is something we continue to work on with our own staff here at the University of Utah. I mean, there's a lot of more traditional sort of implementation approaches. One is giving feedback about, you know, what they've done. You know, patients' stories have been pretty effective in terms of saying this screening that you took time to do actually resulted in this for the patient.

So we're finding that that's, you know, well received in general. But there's something to creating connections between the staff and those who are doing the outreach work on sort of a collective team. And a lot of health systems are really wrestling with this because these are a lot of new roles being created and really trying to, you know, kind of creating an esprit de corps, as it were, you know, sort of a group that's really in it together. So we're trying to do more of that in addition to the general, you know, usual run charts and reporting back about sort of, you know, to their managers and things like that, really trying to engage them. But, yeah, that's a bit of it. >> One more question for you, Andrea.

Given that staff are pressed for time, have you looked into paring down the four top screening questions that determine the desire for follow-up? >> So this is a really common question that a lot of health systems are really trying to wrestle with because, you know, we have limited time. So it's like how few questions can we ask before we can move on with this? The challenge here is that what we're finding is that you have to ask a certain amount of questions to be able to allow patients to even understand what we're talking about. Because when we're talking about social needs, it's not just money.

It's about social support, as I mentioned. We ask about child care and elder care as part of our screening, which can affect anyone. Transportation barriers can affect anyone for any number of reasons. So we're really trying to expand the conversation beyond just do you have money to pay for medications or for food? It's more expansive than that. So we find that the sweet spot is those ten questions, and it does take only a minute. So everyone is trying to pare it down.

>> Right. You know, understood. Now a couple of questions have come in for Dr. Jack. So, Brian, do you think large language models like ChatGPT have a potential role for conversational agents? >> Yeah. Thanks, Chris. And thanks to whoever asked that question. It's a good one. Yeah, you know, I think that, you know, things are moving so quickly in all these areas and the sky is the limit. And I think the short answer is yes.

And I think that the opportunity to use all these systems, you know, within the structures of, you know, clinical medicine is really profound. You know, integration into EMRs, integration into patient portals, and, you know, also in terms of directly, you know, assisting clinicians in delivering, you know, clinical content. So in the situation of Gabby, for example, it could be that Gabby could do the risk assessment and begin the conversation at home. Or maybe even to start with, the clinician could just prescribe Gabby for a patient and not take all the time necessary to do the risk assessment while in the office visit.

The patient could go home, meet Gabby, do the risk assessment, begin the conversation, and print out the My Health To-Do List, which is the list of risks that they have, and then begin conversations with Gabby about each of those risks, and then come back and talk with the clinician about, you know, this is what Gabby has told me, what do you think about these sorts of things? So it can be in conjunction with clinical care. It could also be, I think, as this question is getting at, that it could be incorporated into, you know, patient portals or some other population health tools that big health systems are using increasingly. And we believe that our data actually shows that if a large health system identified women between 18 and 35 in the target user group that we have identified that it's successful with, and delivered, you know, Gabby as a tool to everyone in their health system to use at home by themselves, over time health risks will be identified and addressed, and the population as a whole will get healthier. You know, I think our data supports that. And if not, things are moving in that direction.

Another possibility is, you know, embedding conversational agents or chatbot technologies, a variety of different technologies now, into patient portals to talk about, for example, the shared decision making around preventive health services, colonoscopies and PSAs and mammograms and cancer screening, et cetera, to save the clinicians time, which is also becoming a very important, you know, outcome variable for all these systems as health workforce challenges are becoming more profound.

So I think the answer is yes to the question, and there's lots of exciting ways in the future about how exactly that's going to happen. >> Thank you. More research for AHRQ to fund, right? >> There you go. Yeah, and thanks to AHRQ for doing it.

>> So we also had a follow-on question about your presentation, Brian. Have you explored post-birth interventions? The asker of this question says that this person's unde

2023-03-14 09:44