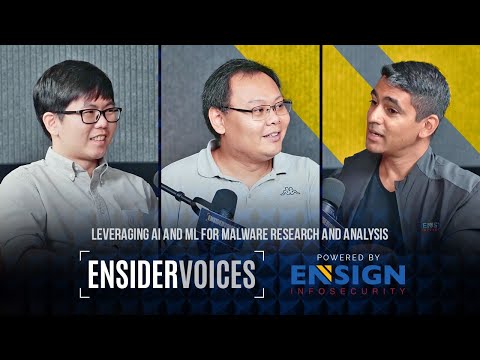

Leveraging AI and ML for Malware Research and Analysis - EnsiderVoices Episode 1

Hi everyone, I'm Gaurav and I'll be your moderator for today. I'm the Head of Advisory and Emerging Business here at Ensign InfoSecurity. In my advisory role, I help my clients navigate digital risks of all sorts and we're going to get into one of those digital risks today. The topic for today is leveraging AI and ML for malware research and analysis and I've got two of my colleagues with me today - Joon Sern and De Sheng, so tell us a little bit about yourself and what you do. Hello, I'm Joon Sern. I'm a Director of Machine Learning and Cloud Research at

Ensign Labs. So what we do is that we actually try to develop our own proprietary AI and machine learning algorithms to help our analysts - they may be SOC analysts, they may be malware analysts - to be more efficient in their work. So we employ some of these AI techniques to help them. Now, it's not just to actually write a paper or to come up with some research; we actually have to deploy it and therefore that's why we are also moving towards the cloud to actually push out some of these new technologies very quickly to our clients and also to our users.

Awesome. I want to ask De Sheng to introduce himself very quickly and tell us what he does. Hello everyone, I'm De Sheng. I am with the malware research team so we work together with the ML Ops teams and the ML team to actually look at how we can do further research on malware, right, and to look at how we can extract out TTPs and IOCs from malware so that we can better detect them. How do you think about doing malware research differently and how does machine learning and artificial intelligence help make the process either better, faster or do the work differently? So what we're trying to do is to actually help De Sheng in his work. The first thing we're trying to do is to actually classify the malware families; so given a particular malware binary can we tell what is the family because if he knows the family, he roughly knows what that malware is trying to do and its objective. So what we have done is actually to take some of

these malware binaries; convert it to an image. So actually malware binaries are actually a string of bytes. Conventional ways of doing this is actually to hash this so the string of bytes can be hashed; can be turned into a hash and then you just do a hash comparison. However, we find that is actually quite rudimentary because a small change or just changing the packer may actually change the hash significantly, right, or just changing the string or just changing the code slightly will change the whole compiling and the hash so that is where we actually try to convert it to an image and then we apply deep-learning algorithms on it; so there have been a lot of breakthroughs regarding image analytics recently before the recent LLM explosion. So before that was actually image, right?
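[Editor's note] The contrast between hash comparison and the image view can be sketched in a few lines. This is only an illustration, not the Ensign Labs pipeline: the 16-pixel row width, the helper names and the synthetic "binary" are all assumptions, and a real packer change would alter far more than one byte.

```python
import hashlib

def bytes_to_image(data: bytes, width: int = 16) -> list[list[int]]:
    """Lay bytes out row by row as grayscale pixels (0-255), zero-padding the last row."""
    padded = data + bytes(-len(data) % width)
    return [list(padded[i:i + width]) for i in range(0, len(padded), width)]

def changed_pixel_fraction(img_a: list[list[int]], img_b: list[list[int]]) -> float:
    """Fraction of pixel positions whose values differ between two same-sized images."""
    flat_a = [p for row in img_a for p in row]
    flat_b = [p for row in img_b for p in row]
    return sum(x != y for x, y in zip(flat_a, flat_b)) / len(flat_a)

# Synthetic stand-in for a binary (assumption): 1024 bytes, then one byte flipped.
original = bytes(range(256)) * 4
mutated = bytearray(original)
mutated[100] ^= 0xFF
variant = bytes(mutated)

# The two digests are unrelated, so an exact hash comparison reports "no match".
hash_a = hashlib.sha256(original).hexdigest()
hash_b = hashlib.sha256(variant).hexdigest()

# The two images differ in exactly one pixel out of 1024.
img_a = bytes_to_image(original)
img_b = bytes_to_image(variant)
fraction = changed_pixel_fraction(img_a, img_b)
```

The hash gives a yes/no answer that flips on any edit, while the image keeps almost all of its structure, which is what lets an image model still see the two samples as similar.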

All the stable diffusion and image classification...so we employed some of those techniques to actually try to classify whether that binary is any of the families that we have known. Now, the main problem with this is that when we work together, we realize that the data set is not static. We used to have 30, 60 families, right? Now he has upped- he has increased the number of families to 300 over families. So, how do we handle an ever-increasing number? Why is that an explosion? Is it because there are more attackers out there or is it just because the malwares have started to mutate and become part of different families? What's driven this explosion in numbers? I think it's a combination, right, and we are grabbing the samples from multiple sources as well, so maybe that will also add up to the explosion of the things that we are seeing right now. So people are remixing and building off of each other as well. I want to read back what you said before you get to the interaction between the two of you. So you said that you're

using the previous method of doing things using hashes, which is kind of like a string that's generated from the code; wasn't useful because a small change in the code means a large change in the hash - that's just how hashes work, so using imaging techniques and just to make sure I understand this, you're using the techniques that we've seen: is this a picture of a cat? Is this a picture of a dog? And the artificial intelligence is able to tell: "Yes this is a picture of a cat, this is a picture of a dog", but you're doing that on binary blobs to tell whether this is the same family as the other family; so basically is this malware family the cat family or the dog family. Yes, correct. So that's what we are doing but we are not just doing a simple classification. So because we know that the number of families will increase over time, it's more of an image recognition technique; so you can think of it as facial recognition when you enter a building or your employers in your employment, right? So what happens is that typical way is that when you go in on your first day, they'll take a few pictures of you. They will not train the underlying algorithm on your face but they will use your face in the database, and then if you enter the next day and it looks similar to your face that they have in the database, then they will recognize it and then they will let you in.

So it's more of a scalable way to approach this problem, whereby because of the ever-increasing number of families, we actually have to keep the model constant. But he has to classify more and more people. And that facial recognition example or analogy is a very interesting one because as long as your face is in the database, even if one day you forget to shave or you decide to wear glasses to work, it still works because it recognizes that you're similar enough to that original image, even though something's a little bit different. Is it the same for malware? So if people start to mutate a little bit of the code here and there; they use a different packer; does the image look similar enough for you to be able to classify it? Yes, so that's actually the finding that we had. We found that when they changed the packer,

it didn't affect the malware image significantly. I mean, it did change quite a bit, but not to the extent that you will break the model. Of course, there are some limitations. When the binary is encrypted, then the whole image becomes homogeneous; everything is...

the entropy is very high. So then, it doesn't work on this kind of use cases. But then, do you have an ability to interact between the two of you: so if the binary is encrypted, are you able to provide him a different version of that same sample to be able to unpack it or does that lose the value then? So yeah, if it's encrypted, typically my team will try to decrypt it, right? So once we decrypt it, then it will just be another, you know, very open malware sample, then we will pass it back to them so that they can do further training on it. So your research helps train your model and your model helps him classify the families better. So how does that workflow then kind of work between the two of you? Maybe I'll ask you first. So, most of the samples are actually provided by him, so he'll have to actually curate the data set and make sure it's clean; he has to make sure that there's no encrypted data within the data set that we used to train. Otherwise, we will mess up our whole training.
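[Editor's note] The "entropy is very high" remark can be made concrete with byte-level Shannon entropy, which is one plausible way to screen encrypted samples out of a training set. The 7.5 bits-per-byte threshold and the function names here are assumptions for illustration, not the team's actual curation rule.

```python
import math
import os
from collections import Counter

def shannon_entropy(data: bytes) -> float:
    """Entropy in bits per byte: 0.0 for constant data, 8.0 for uniformly spread bytes."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in Counter(data).values())

def looks_encrypted(data: bytes, threshold: float = 7.5) -> bool:
    """Flag near-random content; the threshold is an assumed cut-off, tune on real data."""
    return shannon_entropy(data) >= threshold

# Random bytes stand in for an encrypted payload; a zero-padded header for plain content.
encrypted_like = os.urandom(65536)
plain_like = b"MZ" + bytes(1000)

flag_encrypted = looks_encrypted(encrypted_like)
flag_plain = looks_encrypted(plain_like)
```

Samples flagged this way would be routed for decryption first and only then added to the training data, matching the hand-off described above.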

So what we have done, is actually to leverage some of the cloud for this; so we are not going to host malware samples on-prem, so we put some of these on S3 buckets - of course defanged. But then, we still don't want to put it on-prem - so when we say defanged, it's maybe a dictionary- a NumPy dictionary. Okay, a dictionary of NumPy arrays. So, these are stored on the cloud. We actually have an instance on the cloud that will spin up every two weeks; so it's Kubernetes-based. It will read all of his samples and then retrain the model on AWS; in this case, we use Elastic Kubernetes Service. So this pod in the

Kubernetes service will actually run and it will train based on all the samples that we have, and then it will put the binary in the S3 bucket, and then when he wants to use the model, he will...the script will always pull the latest model from the S3 buckets, so it's actually quite contactless because it is automated, right? The training is done; it puts into the S3 bucket; he then pulls the latest one from the S3 bucket. And it sounds quite scalable as well, because then you don't have to build an entirely new model each time you discover a slightly different variation or a slightly different malware family. Any fascinating insights that you've discovered in this process of doing this very unusual way of trying to classify families: something that surprised you or surprised the community at large? I think what we found was that the current model - it works very well on the data that you have seen, even though its size is the same. So for example, the current model is about 300 megabytes; even though he has seen 300 over families, right, he's still able to perform well on those samples that he has seen. What this means is that we may no longer need a

lookup table or to host a huge database of malwares, and then to do a manual search of whether this particular binary is similar to any of them because the model can already do it so this information is actually saved into your model; it's like your LLMs, right? They save all of the information on whatever they have trained into 10 GB of memory and that's it. Now, increasingly, what we have found is that some of the latest samples may not work too well. Actually, I should let De Sheng explain some of this. Yeah, please explain.

Yeah, what we found is that some of the samples don't work too well because of the way that the malware authors are actually trying to evade this kind of detection, right, because they also understand that there are also heuristics involved when it comes to detection of malware. What happens is that they'll try to make their malware look more similar to, like, a benign program and things like that; and they might also deploy encryption, right, to encrypt the content of the malware and therefore, it becomes more homogeneous in the most sense, right, and for that then if we look at it in terms of image then they might all look quite similar and therefore, you know it's harder for us to figure out whether it's family A or family B. Fascinating. So they know that we're looking out for them; they're trying to - going back to your facial recognition argument - trying to disguise themselves like people who already have authorization to get into the building and that's why your algorithm sometimes has challenges differentiating the allowed security guards and cleaners from the disallowed kind of criminals trying to get in. But it sounds like based on the way that you're approaching the problem, this is something that we can continue to work on; it's not an unsolvable problem - would that be fair? So actually, I think we are actually working together on the next generation whereby we are going to look at additional features. So, currently now, the whole analysis is static

analysis. We are looking at some of the dynamic analysis, yeah. So static analysis basically just means looking at the malware family in and of itself as a binary blob. Dynamic means as it's executing? Yeah, we have tried to execute it in a sandbox to grab more information about how the particular binary behaves and things like that; and then from there, we can build call trees for example, and maybe some other form of images, right, and then we can bring it back to the model and the ML team to actually help us with, like, figuring out how we can use the information to classify the malware better. So this is very exciting. This is an example of how the good guys are using AI to catch the

bad guys. I'm going to talk about the bad guys in a bit but first, I want to find out a little bit more: what other interesting applications have you seen for how the good guys can use AI and ML to defend themselves and protect their organizations? This is just one example, right, malware detection? I think in Labs, we are also looking at some of the other logs; typical security logs such as your network logs and your endpoint logs. So apart from malware analysis, we're also trying to deploy some of the algorithms that we have developed to detect threats on network logs and security logs in order to detect when threats are coming into a particular organization. And why is the AI approach different from the traditional approach of just looking for particular types of signatures? What's better about it? Actually, the very first type of AI is an if-else algorithm, right? In the past, if-else was actually considered as AI. Now, because of signatures, right, we note that the condition is too strict, so that's why people actually delve into AI to loosen the conditions; so what this means is that we actually convert some of these features, right, into a numerical vector. Now, your comparison can now be based on a numerical vector and then it's on a continuous basis. It's no longer a discrete yes or no; is it similar or is it not similar; now, because it's a

vector, you can do things like, Euclidean distance: just calculate the distance between two vectors; cosine similarity, etc. Sounds like another imaging technique that you're using; you're starting to actually map these out physically? So what we normally do, is that for the logs that we see, right? It's actually a time series, so it's a time series of features. So for example, we can look at the bytes, the protocol and how they evolve over time, and if there are any of these byte flows or sequences that suddenly match a known malware, then it is something that we are interested in, so that's actually the next track of work we are trying to do, whereby his side will actually detonate some of the malwares and then we will collect some of the network traffics, and then we will actually try to do malware detection based on just network traffic. Fascinating. So you want to see what it looks like after it's detonated and what that- kind of

the ripples afterwards across the network look like and then try to map that; so you can see if you detect that same type of behavior; that same type of pattern in another network, you know that this malware has probably been there as well. Yes, okay. Okay, interesting. So that's another great example of how the blue teams use AI to defend themselves...but how about the red teams? You've been staring at- and you said this a few times: you have to think like the author of the malware to understand how they're operating. How have you seen the authors of malware use AI to do things differently? Are you seeing it? I think those are like, the more advanced ones, right? And for the most part, I think it's not so common, right, such malwares and what we are seeing more commonly, right, is more towards, like, ransomwares and things like, for those they typically - for now - they don't really need ML or AI to help them with it but I can foresee that in the future, right, when their targets grow bigger and all; they want to rely less on human effort to actually do the lateral movement within the organization, right, when they deploy their ransomware; they will actually look into solutions or think about ways to actually automate and incorporate machine learning methods to actually do the lateral movement; because if you think about it: if a ransomware group wants to target a particular company, for example, they have a lot of machines to actually look at and try to lock down, right, in the process, and relying on manual effort is actually quite tedious. I believe that will be the future trend with regards to how

ML might come into play in the use of like, malware. Attackers are going to use it as a productivity tool as well. The other thing that I've been reading about is this thing about mutating malware. I use Copilot, the coding one, to help me just code faster. Do the bad- are the bad guys using things like this as well? Definitely. Definitely. If you think about it, the bad guys are just the lay person,

sitting around in a lab. They don't behave like, you know, maybe one hacker - lone hacker - in the basement anymore; they are probably huge teams of people and they probably have overseas teams as well; you can essentially see them as an organization of sorts. So are they using the same tools that all of us are using in organizations to be more productive and to automate as well? That brings me to another tool that everybody's talking about now - ChatGPT and all of the large language models. All of us are using it to

automate and be more productive at work, but are there risks and threats in using that and are there challenges that security researchers need to think about? For things like ChatGPT, it's actually such a huge model, right? Can be 10 gigs, 20 gigs of ...in terms of size, so definitely there are vulnerabilities within there that we are not aware of but recently, there are actually new ways to actually break these models again. So there is a new wave of techniques that's coming out; it's more sophisticated; it's no longer someone trying to manually create English words to input into the model. Now, people are actually doing things like white box testing or hacking against these LLMs. So there was a paper that was published in July from Carnegie Mellon. They actually showed that by backpropagating the gradients through the LLM, they were actually able to make it swear at the users or even write a death threat despite the safeguards that have already been put in place. So what this means is that- and how they did it was that they actually appended an adversarial string behind your prompt; so your prompt could be something very benign, but once I add this string behind it, right? Straight away it starts scolding you; it starts cursing you, and etc. So this actually brings about a new field, right, whereby it's

actually a new vulnerability and a new attack vector for attackers. Now we have shown that you can actually create objectionable content. Now, imagine your ChatBot is actually connected to some of the backend databases, and then it can actually start producing information of your backend databases to the attackers without the attacker actually going into your network because it is actually connected to your ChatBot so...because ChatBot produces texts conditioned on what information they have and imagine if they start revealing this information that you have. So there are two scary prospects from that: one is that confidential information can be revealed because the ChatBot gets irritated into revealing information that it was not supposed to reveal. As long as it's connected, it should be able to pull that information- that's the first

scary part. The second scary part is actually some of these AI systems are going to be plugging into decision-support systems; they're going to be making decisions on behalf of users at scale. I mean, you mentioned that the attackers have to deal with thousands of computers. Good guys have to deal with thousands of systems as well and sometimes, we'll be using AI to support it. If it's possible to manipulate how the AI works from the outside, you can actually then manipulate the decisions that are being made about whether to turn the water systems on, whether to change the temperature at your home, whether to open all of your doors and locks; so you get into a strange area and I think it sounds like AI is going to be a fascinating area of research for both of you in the next bound but yeah, any thoughts on that? So that is why we actually have to carry out some of this research on these new fields. Increasingly, we note that cyber is no longer like in the past where you're just trying to secure an organization; now we realize that organizations may start using open source models, etc., that actually may present a new attack vector into their backend databases

so all this needs to be studied. It's a very evolving emerging field. Excellent. Any final thoughts from you, De Sheng? Yeah, I think the same way. The malware research team will actually have to rethink about some of the ways that we do our analysis, right, especially with regards to LLM and things like that...yeah, it'll be quite interesting to see how the entire industry moves forward with the introduction of AI and ML into the conventional malware research kind of field. Excellent. Well, I think it was a fascinating conversation; we kind of dived into this whole

area of leveraging AI and ML for malware research and analysis, and we started by understanding, you know, what malware research is, the challenges you face, what you're trying to do and why you're trying to do it, and then we ended up with this very interesting idea of using imaging almost like facial recognition technology to understand how the malware works, and at the end, we really discussed how the face; the shape of malware is changing now with the introduction of AI, both at a tactical level for how they develop the malware, but also at a strategic level that LLMs themselves are a type of technology that could be malware-embedded and understanding what malware looks like in the world of LLMs and in the world of AI, is different from in the traditional world where you have a binary file to deal with, whether it's hostile strings appended to the end of your query to, you know, whether it's understanding the decision-support systems that's driving in the data that's supporting it. Looks like AI is going to be here to stay, and it's going to make a big difference to the way that we do our work. But I want to thank everyone for joining us today, I think it was a fascinating conversation; went all over the place but I think it was a fun chat for myself and hopefully, for you guys as well but thanks, that's all we have for today and thank you so much for joining us. Till our next episode, stay tuned

and thank you so much for joining us

2023-10-15 15:49