Walking on Mars

NASA's Jet Propulsion Laboratory presents the von Karman Lecture, a series of talks by scientists and engineers who are exploring our planet, our solar system, and all that lies beyond.

Hey, good evening, ladies and gentlemen. How's everyone doing tonight? Good. Well, thanks for coming out and joining us. Enjoy the A/C while you can.

So, virtual and augmented reality promise to transport us to places that we can only imagine. When joined with spacecraft and robots, these technologies will extend humanity's presence to real destinations that are equally fantastic. NASA's Operations Laboratory at JPL is spearheading several ambitious projects applying virtual and augmented reality to the challenges of space exploration. Tonight's guests will share their progress so far, the challenges that lie ahead, and their vision for the future of VR and AR in space exploration.

As lead for the Operations Laboratory at JPL, tonight's speaker is developing highly innovative and effective space mission operations products, with an emphasis on human-computer interaction and natural user interfaces. He produced the first applications on the Microsoft HoloLens platform for Mars rover operations, astronaut assistance on the International Space Station, and spacecraft mechanical design. Through key relationships with industry, he is applying pre-market technologies, influencing future technology roadmaps, and establishing a leadership position for NASA in the application of virtual and augmented technologies for space. He is also managing industry partnerships that are bringing NASA's mission of exploration to the public and to STEM audiences worldwide. Here to lead our discussion tonight, please welcome tonight's guest, Mr. Victor Luo.

Thanks, Mark.

This is the first image back from Mars, sent back from the Mariner 4 spacecraft more than 50 years ago, in 1965.
And I know it doesn't look super impressive by today's standards, but back then, can you imagine, it was the first spacecraft that got to another planet, and the scientists and engineers were so excited to look at what the camera had captured that they couldn't wait for the image to come back. So they started taking the bits of data and plotting them on a grid. This is the result of that grid. It's a color-by-numbers thing: they colored it in, they filled it in, and this is the first glimpse of the Red Planet.

Ten years later, we set foot on Mars for the first time with the Viking 1 robotic lander. It took a little selfie of its foot and the rocks around it. It's real pretty. Ever since then we've sent over a dozen successful missions to Mars, culminating in the Curiosity mission in 2012.

Now, in 2012, when the Curiosity rover landed, the first image that it took was this one. Yeah, it's a little bit blurry. These are taken from the hazard cameras of the rover, so there's a front image and a back image. They don't look very fancy, but for us on the mission it was a critical moment, because it was the first time we recognized that the mission had been successful. The rover was safe, and it was able to communicate with us and send these images back.

So these three images depict a great story of how we've spent the last 50, 60 years exploring Mars. But the one problem here is that we as humans don't fundamentally explore this way. We don't walk around our world looking at pictures, right? This is how we explore: we run around our world, we immerse ourselves in our environments, and we share those experiences with those around us. It's a wonderful feeling to be able to share that with other people. But for Mars we
have many challenges, and so today I want to spend some time talking about those challenges. Why is it so hard for us to drive a robot on Mars? What are the challenges, and what can we do with these new technologies we've been hearing about, virtual and augmented reality? How can we take those elements and improve the way our scientists explore this foreign planet?

We'll start with the first challenge, and this is the one we kind of touched upon already. We've got these images, these 2D images. That's all we have from the rover. You've heard about these fancy cars like Tesla and the Google self-driving cars. They've got fancy lasers and radars and all these gizmos. We don't have that. All we have are these camera images, and from those we have to depict the entire 3D environment for our scientists. So that's the challenge of the limited types of data.

The second issue is this. Half of you guys in the audience know what that is. I apologize that you had to experience that. For the rest of you guys, this is a 56k modem. This is how we used to connect to the internet. It would take about five minutes to do that, and then it would take 30 minutes to download your favorite song. Those were tough times.

We use the Deep Space Network to communicate with our spacecraft, and it's not this bad. It's actually a pretty strong pipeline, a pretty broad bandwidth. The challenge is that each mission only has a small segment of the day in which it has control over that network to communicate with its spacecraft. So for Mars, for example, we get a chunk of that bandwidth, and if you spread that out and average it across a day, you get something like 56k. That's the challenge, right? Not only can we only take pictures, but we can only select a portion of those to send back, so we have to be very careful about the data we get back.

Here's the third issue. Some
of you guys may recognize this as lag. We in the business call it time delay. If you're a gamer, 500 milliseconds of lag is horrible; you can't even play a game with that much lag. For us on Mars, we have a 15-minute time delay one way. That means it takes 30 minutes before we even know whether the thing we sent a command to do actually happened. So what that means is we can't joystick the rover from here on Earth. We have to send it a block of commands every single day, and we only get one shot at that, so we have to be very careful about what we want it to do every single day.

Here's the last thing, one that we don't really think about. This is something we take for granted, and that's collaboration. We're doing that right now, right? We're having this conversation together. We can do that on Earth very easily, but it's very hard to do on Mars. Our scientists are primarily geologists. On Earth, what they do is walk around on these outcrops, these hills, these mountains. They study the rocks, they pick them up, they use their tools, and they talk with each other in the same location. When

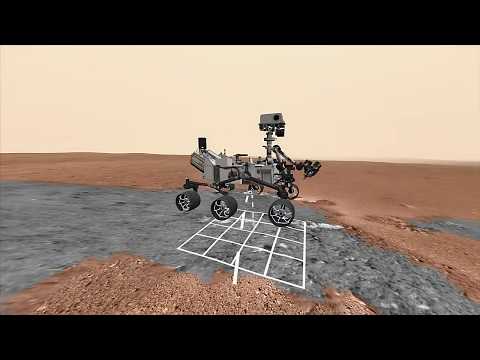

we tell them to study Mars, they don't have any of those capabilities anymore. They're behind a computer screen, they're usually distributed all around the world, and they only get to talk to each other over digital means.

So we took a look at this challenge: this is the data set we get back, so how can we take it and transform the way they do their everyday jobs? Our team at JPL took that problem, and we made this.

This is what Mars looks like today. Every pixel in this video you see here is real. There are no computer-generated graphics. It's all an automated pipeline: we're taking our 2D images and building this 3D world that our scientists get to explore. It's the best rendering of Mars that we have today. I could go on and on about how we're doing this, so I'll just go quickly through it.

All we're doing is taking these 2D images, building a 3D model, applying the colored textures on top of it, chopping it into these 3D squares, or tiles, and then streaming it to mobile devices. So we have an experience that works on the phone, an experience that works on the web, and an experience that works on these AR devices, these augmented reality devices. What that really means is that, for the first time, our scientists get to get up from their desks and walk around on Mars, and they get to do that together with their colleagues all around the world. They really get to explore Mars in the way that they explore Earth. All right, thank you. We're giving them their natural capabilities back, and that's super powerful for them.

But we didn't want to stop here. Our scientists get really excited about using this, but what about everybody else? So we partnered with our friends at Google and built this experience called Access Mars. Access Mars uses a technology called WebVR, which means it works on any device with a browser. So
it works on your phone, your browser, and your VR headset. Let's take a quick look.

On November 26, 2011, NASA and JPL launched the Curiosity rover on its mission to find out if Mars has ever been suitable for life. Since then, it's taken over 200,000 photographs, used by NASA JPL scientists to create the most realistic 3D model of Mars ever seen. Today, it's yours to explore on a computer, a phone, or in VR. The real surface of Mars, photographed by the Curiosity rover, now in your browser.

So that's live today at accessmars.com, and I encourage you to go try it out later. We're gonna update it over time as Curiosity continues its journey on Mars.

Before we move on, I would like to show you a little bit about how this is being used by scientists today. I can't get the headset out and have you all experience it in augmented reality, so I'll do the next best thing and show it to you on the web. This is the tool that our scientists use on the regular to explore this 3D Mars

experience. You'll see that initially it looks very much like the images I showed you. But what we can do is back out of this image, and you'll see that we're actually in a 3D environment with the rover. Just like the video showed you earlier, everything here is real, not computer-generated, and it's running in real time in your browser. What's cool is that we can click on any of those rocks that we care about, and it shows you a list of images here on the left. Those are the raw images that were taken by the rover, and when you click on one, it changes to the perspective from which that image was taken. You'll see that it lines up almost perfectly with the 3D environment. So it really gives the scientists the credibility, the confidence, to then go operate the rover on Mars.

So this is the basic functionality for how we're changing the way that people are operating the rover on Mars. But this technology is not just limited to Mars. If we can do this for Mars, we can really do it for any remote environment. We've already started to do that, and today I want to show you guys a sneak peek of another product that we're working on, in conjunction with the Japanese space agency. This is a mission called Hayabusa 2. It's a very ambitious mission: it's going to rendezvous with an asteroid called Ryugu, survey it for two months, take three soil samples, and land four rovers on it, and then it's going to deliver those samples back to Earth, all in a matter of two years. It's a very challenging mission, and we really want to help the scientists get the most science out of the short amount of time they have. So I'm going to show you a preview of what we can do with that data set. We don't have that new data set yet, so I'm going to show you what we did with the previous data set, which is from their Hayabusa 1 mission. This is Itokawa.

So
initially, here you can see this ring around the asteroid. Each one of these squares is an image of the asteroid. If I click on one of these images on the bottom, as you can see, it highlights it and takes you to that image overlaid on the asteroid. So again you get this representation of the raw image on top of the 3D environment, which gives you a little bit of that context, that spatial awareness.

So where are we going next with this stuff? What's next for Mars exploration? Well, the next thing we have is the 2020 rover. There's another rover we're launching in 2020, and on that rover there's something new: there's going to be a helicopter. It's gonna be the first interplanetary aerial vehicle. Super exciting. It's a 2-meter blade, one-kilogram payload. We're just super excited about what this new capability brings to our sensor package. We've been able to get these 2D images and send them down, but what if now we can get video? What if we get the third-person view? What if we really map it and walk around and fly around the way we do here on Earth? So we're super excited about this mission.

But even further out, we're gonna send humans to Mars. Whether we do it, or China does it, or SpaceX does it, we're gonna do it someday soon. And when we do, it's going to be a really exciting time. It's gonna be a global event, just like the World Cup. Everyone's going to be tuned in. But instead of watching it on their TVs this time, and instead of just the scientists and the engineers and the astronauts getting to go to Mars, we will all be there already, virtually exploring Mars and welcoming the astronauts as they take the first steps in the future of Mars exploration.
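
The time-delay and bandwidth constraints described earlier in the talk come down to simple arithmetic. The sketch below is illustrative only. The function names are made up for this example. The 1.8 AU distance is chosen because it gives roughly the 15-minute one-way delay quoted in the talk (the actual Earth-Mars distance varies, roughly between 0.4 and 2.7 AU). The 2 Mbit/s link rate and the 40-minute daily pass are assumed values, picked only to show how a fast but brief link can average out to something like 56k over a day. None of these numbers are mission figures.

```python
# Back-of-the-envelope arithmetic for two constraints mentioned in the talk.
# All inputs in the demo below are ASSUMED values for illustration,
# not actual mission or Deep Space Network figures.

SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum, km/s
AU_KM = 149_597_870.7               # one astronomical unit, km
SECONDS_PER_DAY = 86_400.0          # Earth day, used for the daily average


def one_way_delay_minutes(distance_au: float) -> float:
    """Light travel time, in minutes, over a distance given in AU."""
    return distance_au * AU_KM / SPEED_OF_LIGHT_KM_S / 60.0


def day_averaged_rate_bps(link_rate_bps: float, pass_minutes: float) -> float:
    """Average data rate over a full day when the link is only
    available for pass_minutes at link_rate_bps."""
    return link_rate_bps * pass_minutes * 60.0 / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical Earth-Mars distances, in AU.
    for distance_au in (0.5, 1.8, 2.5):
        one_way = one_way_delay_minutes(distance_au)
        print(f"{distance_au} AU: one-way {one_way:.1f} min, "
              f"round trip {2 * one_way:.1f} min")

    # Hypothetical link: 2 Mbit/s for a 40-minute pass each day.
    average = day_averaged_rate_bps(2_000_000, 40)
    print(f"day-averaged rate: {average / 1000:.1f} kbit/s")
```

The round trip is simply twice the one-way figure, which is why a command sent at a 15-minute delay cannot be confirmed for about 30 minutes and why the rover is driven with a daily block of commands instead of a joystick.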

Thank you.

Before I open it up to general Q&A, I'd like to welcome a few of my colleagues up on stage. These are the experts who worked on these technologies and these missions. To answer a few questions, we're gonna have a little discussion, so please come on up to the stage.

So we have Alice Winter, who's our user researcher on this walk-on-Mars experience. We've got Parker Abercrombie, who's a project lead on the walk-on-Mars experience. And Abigail Fraeman, an avid user of our tool as well as a scientist on the Curiosity mission.

Since the topic of the lecture today is walking on Mars, let's start with walking on Mars. Abby, how did it feel to walk on Mars for the first time?

Oh, it was almost surreal. I'm a scientist on the Curiosity mission team, and I spend hours and hours looking at these two-dimensional pictures. I spend so much time that I'm almost imagining I'm there. But the first time I put on the headset, I really did feel like I was standing in a place that I already knew but had never actually gotten to visit. It was this really awesome, magical feeling. And then, once I got over that, I was really excited, and I just wanted to run around. I wanted to run to the top of the highest hill in the scene. I wanted to run along the traverse for kilometers. It was really a great experience.

And it's been really helpful for doing science. As a geologist, it's important to be able to see the rocks that I'm looking at in three dimensions. It provides us important information about the environments that emplaced the rocks and the processes that shaped them over time. So again, being able to see them in the 3D environment, stuff just starts kind of clicking, and you go, "Aha, I understand the relationship between this rock and this rock," and now I can see it immediately by being there.

That's great.

Yeah, it was incredible. I first tried OnSight just after I joined JPL four years ago, and
it was an amazing experience to really feel like I was standing on Mars, and to see Mars in a way that I had never seen it before. I was struck by how alien or different it looks, but also how similar. Then, a couple of weeks after I first tried OnSight, I went camping in the desert, in Anza-Borrego, and I was struck by how similar some things looked to areas on Mars. I realized that I had started thinking about Mars as more of a place than this abstract dot in the sky, or this place where the images come back from. It really felt like somewhere I had been, at least virtually.

Alice, your title is user researcher. For the audience here, can you tell us what that means, and what do you do for walking on Mars?

Yeah, so my role can be described with different

titles. Some people call it a product owner, some people call it a user experience researcher. But the core task is really to represent user interests in development decisions. To do that, you've got to know your users. You've got to get out there, you've got to meet them, you've got to know their jobs, learn a bit about the problems they're having, and see what you can do to solve them. Then you synthesize that and bring it back to the development team, because they're working on really hard problems, like how do we take all these images and reconstruct them into this 3D model. And I'm bringing them the geology perspective that I've learned from people like Abby.

What are some of the challenges you've heard from the scientists, and how are you able to improve the overall experience based on these interactions?

Yeah, so one really three-dimensional problem is: where has the rover been before? I'm standing in this environment, I see the rover right there, but at some point it was moving, right? Where did it come from? There are a lot of ways you can display this, depending on what you need to see. Do you need to see where it's been since the beginning of the mission, or maybe where it was yesterday? So I went out there and I talked to people. I'm like, "Oh, why do you want to know that? What fidelity does that direction need to be? Does it need to be a video of the rover driving, or could we show arrows? What does it help you do?" And it helps them get a perspective of where we're going on the next day, or perhaps where we've been before, so we can see what data we have collected and maybe what data we're going to collect.

And Parker, for the developers out there, how is it building these experiences? What are some of the challenges of building in augmented reality? Maybe tell us a little bit about what that means.

Absolutely.
Well, on the one hand, I think it's easier to build these sorts of experiences than it ever has been, which is great. The gaming and entertainment industries are generating a lot of this technology, the headsets are becoming more capable, more readily available, and more affordable, and the development tools are continuing to improve. But there are still a lot of challenges, because the medium is so new.

The main ones that come to my mind are the technical challenges. The headsets, especially the fully mobile headsets, are not as capable as a desktop workstation or a traditional gaming PC, so getting the software to run performantly on the headsets can be challenging.

There are user interface and perception challenges, because the immersive interfaces are so different. There aren't a lot of examples to look to. Even simple things, like what a menu should look like or how the user should interact with the system, are not totally solved problems. You can look at a few examples of how other people have done it, but there isn't always a clear answer, and making that usable and learnable for new users can be challenging.

And there can be human comfort issues with the head-mounted display. If you don't pay attention to the user experience, you can make people feel motion sick, which I think is kind of the worst experience a user can have using your software.

And finally, I think one of the challenges of immersion is that it's really easy to put together a cool demo that'll really get people excited, but it's much harder to build an immersive tool that people will really pick up and use as part of their day-to-day jobs, one that provides enough value that a user is willing to put on the headset each day. And one
of the big challenges in developing OnSight has been making the software streamlined enough that it can effectively fit into their workflow, so that it's another tool we're giving them that's actually adding to their capabilities, and not something that's a little too cumbersome to actually use on the job.

Thanks, Parker. Just to add to that, I think the work that Alice does is really key to understanding the users' pain points, what's working out for them and what needs to be improved, so that we can make the software as streamlined as possible and, at the end of the day, it solves our users' problems.

And as one of our favorite users, Abby, how do you think these kinds of tools have changed the way you guys think about science planning as a whole?

Yeah, you know, a couple of ways. One of my favorite experiences was when OnSight was first coming online. We

were in a day of planning, and we wanted to image a certain feature. We were wondering, okay, should we take it here, or should we wait until we drive, when we'll have a better view? We have tools we can put it in, we can calculate viewsheds, but that's so cumbersome. And I said, wait a minute, why don't I just look? So I put on the headset and I looked around and said, okay, this looks pretty good. And then I ran over to where we were driving and I said, oh, this looks much better. So I could tell, kind of intuitively, that we should wait to take this picture, that we'd get a better image after our drive. In that sense it's really helpful for planning.

It's also helpful for planning in helping us understand the safety of our drives. One of the things that we do as scientists is work with the rover drivers to understand: is this terrain gonna be treacherous? Are these rocks gonna be a problem? So again, being able to see that in 3D, and even walk the traverse before the rover drives it, is so helpful in just getting this intuitive sense of what's gonna happen.

Yeah. Tell us a little bit more about that planning cycle. How do you work with your colleagues around the world and make sure you come to a consensus in such a limited amount of time?

Yeah, so usually the way we operate the rover is we plan a day at a time. We'll plan a day, we'll send the instructions to the rover, it will run its stuff while we sleep at night, and then we'll come in the next morning and we'll have all of the data that we collected the day before. We have a very small window of time in which we need to make the plan for the next day before we hit our deadline, when we need to uplink it to the rover. So we're compressed, and we're also located around the world. We have the science team around the world, so we all have to come to an agreement about what we want to do in this compressed period of time. So
again, being able to visualize the data intuitively, and having tools that we can just fire up right away, is so important in helping us all get on the same page, really understand what's going on, and come to consensus in enough time that we can make a plan.

Parker, we've been working on this stuff for four years now. What do you think the next four years look like for this kind of work?

Well, one of the immediate things on our roadmap is building the next generation of this tool for the upcoming Mars 2020 mission, where we'll be taking all of the experience of the previous four years, extending it, and making it even more integrated into the science planning process. Whereas OnSight came into the Curiosity rover mission kind of late in the game, as an additional, experimental tool, our next tool, called Astro, will be a fundamental part of the tactical process used by the science team.

But thinking beyond Mars, what OnSight really provides is immersion and situational awareness of a remote environment, maybe with a robotic vehicle, maybe not. Mars is kind of an extreme example of that: it's a place that you can't physically visit, but this tool allows you to visit it virtually. There are a lot of other applications that could benefit from the same capabilities, including exploring the seafloor here on Earth, exploring lunar surfaces or asteroid surfaces, the upcoming Europa Clipper mission, or even exploring areas on Earth that are just difficult to get to, like volcano craters, lava tubes, areas in the Arctic, or remote geology field sites. We've had a lot of interest from terrestrial geologists in that kind of capability.

All right, I just have one final question for you guys before I open it up to the public. With these types of virtual and augmented reality technologies we're hearing about everywhere, what other things in everyday life do you think they're gonna change? I'll
start with you, Alice.

Hmm. Well, I think it really has the power to connect people to places or people when we're not physically co-located. I've heard of some experiences in the news where people can go to a museum, or go to another place, or visit people in a way that they couldn't before because they were physically separated.

Mars is the furthest place.

Mars is the furthest place, definitely. But like Parker said, I feel like education is going to be a big beneficiary of these kinds of technologies. You could be in a classroom learning about the ocean floor, but then you could all don your headsets and go walk around and do these virtual geology field trips while you're still in grad school. I think education is probably going to be the biggest. Connecting the students to those remote environments that they're studying is

gonna be the biggest. I hope that's the biggest change.

Parker, what about you? What other applications do you see?

I think that these capabilities are going to become more tightly integrated with tools that we already use, as extensions of tools we already use. I think we'll come to a point where the headset is not a completely separate application but another view of your computer, and the applications you normally use on your computer throughout your day will have augmented reality or virtual reality views where it makes sense to have that fully immersive stereo 3D capability. I think it will be a very normal, streamlined part of working with computers in the future. And maybe even beyond that, when you're not at a computer: once the display technology is portable enough that you can carry it with you every day, it will be as ubiquitous as a smartphone.

I mean, yeah, I think my answer is actually very similar to Alice's, but I'll say it anyway. One of my favorite aspects of using OnSight for Mars rover operations is the fact that, as a science team, it makes it so easy to collaborate. One of the features of the software is that we can actually all beam onto the surface of Mars together. We have these little avatars that show up and walk around, and we're all connected through audio as well. It's amazing how much you really start to feel like you're actually at a field site with these people, who might be in Denmark or Great Britain or Australia. You really feel like you're in a room: you're walking around, your avatar can interact with their avatar. And I think just the capability for this to bring people together, whether they're families and loved ones across the globe or researchers who are interested in a common goal, any sort of thing, you just feel so much more together than you do over
a WebEx telecon or something like that. It's really interesting to me.

Yeah, I think overall it's gonna transform the way we capture and tell stories. Right now we capture them with these little things, and it's a very linear timeline. But imagine an environment where, say, it's a concert, or the World Cup, or a football game, and you can replay that from any angle, at any time, and walk through the field as they're playing. These types of technologies are really gonna enable and open up several different dimensions of interactions.

And it's super exciting to see what comes next.

All right, so with that, we'll open it up to questions. If you guys can line up at the mic so that we can capture that, that would be awesome. There's a mic in the middle. If you can't get to it, feel free to shout out, and I can always...

Am I first? Yes? Excellent. Okay, my question is not so much about virtual reality but augmented reality. I understand that the heads-up display in aircraft, especially for fighter pilots, has been really revolutionary in terms of extending human capabilities in a partnership with the machine. Do you envision astronaut spacesuit helmets having full functionality like that, being able to overlay instructions and navigational information, things like that, as they're in the field? And also safety information, like their oxygen level or something, so it can let them know very quickly if there's a problem?

Yeah, we have technologists at Johnson Space Center in Houston working on that problem right now. The one thing to think about, and it's a unique challenge both for fighter jet pilots and for astronauts, is that you can't have a screen. That's the only rule, because if the screen breaks, you know what happens, right? It's a huge safety risk. So figuring out how to get the projections on the screen in a way that feels like it has the right depth cue, but also doesn't obscure their everyday tasks, that's an interesting challenge. We're just starting on that.

It makes perfect sense. Thank you.

All right, thank you. You've spoken a lot about how these technologies can help people feel more connected with places they haven't been. How do you think this could apply to also helping people connect with places they haven't been through things like robots on Earth? For example, here you're showing a lot of visualization of remote environments, and particularly with your delay in communicating with Mars, you can't exactly joystick the

robot, as you said. But there are a lot of places on Earth where you could actually start to interact back in the other direction with the world. So have you started to think about that yet, and what might that look like? Thanks.

Yeah, this actually ties in nicely with the previous gentleman's question of how augmented reality can play into these sorts of tools. In the context of visualizing an environment that a rover's navigating through, the Curiosity rover is at one extreme, where we have a large time delay. But there are other cases, especially terrestrially, where you might have rovers that are in the same field site as you. There, we see being able to overlay onto the real world information about where the vehicle is if you can't see it, for example if you're in an ice field and the vehicle's under the water or under the ice, and visualize sensor feeds showing you what the robot is sensing, as if you were seeing visualizations on top of the rover in the field, helping you understand what the machine is sensing in a way that you're not really able to from a screen.

And as for how that extends to control, I think that could be used by an operator controlling the robot, giving them a view into the vehicle and its surroundings, and its interaction with those surroundings, in a way that we don't have with current interfaces.

Thank you.

Hi. I heard earlier you were mentioning the motion sickness problem with VR and AR, which is definitely very prevalent and has kind of prevented the use of Xbox controllers and joysticks for moving around. I was just curious whether, specific to your projects, you have come up with any innovative ways to minimize motion sickness, such as ways that we can move around the environment in,
I guess, that we haven't heard of before?

I think right now we're really in a discovery phase. The web has been around for a long time, so there are standards for developing for it, and nothing's going to make people motion sick on the web, but there are certain things that we know confuse people. I think we're now at a stage where the whole industry is discovering what the standards are for UX design in VR. We've found a few things in our application that we know cause motion sickness, so we don't display them, or we'll have a feature in our web viewer but not in our HoloLens viewer. On the web, you can change the camera however you want; that's why we can all watch movies without getting motion sick. But if you're watching a movie in your VR device, your body knows it's not moving, so when your visual feedback tells you that you're moving, that can cause motion sickness. On top of that, there's a tremendous amount of individual variability. So I think we're just discovering those standards on our own and sharing them with other people who are developing VR applications. We're always interested to learn what people in the gaming industry have found makes their users motion sick or not.

Thoughts to add to that: I think Alice hit on the big one. Don't move the camera if the user's head's not moving; that gets you most of the way there. Don't do things that you can't do in the real world, especially things that make your brain feel strange. Flying can be problematic; not having something at ground level, so that you feel like you're floating in space, can be problematic. I'll
give you one that's kind of unique to the Mars case. As you're walking virtually on Mars, you're in a flat room, but Mars is not flat, so we're continually having to adjust the height of the terrain to make it feel like you're standing on the surface. We found that as you're walking uphill, if you continually bring the world down, that makes people feel motion sick. So we actually let you walk a little bit into the hill, and then we bring the world up when you stop. But interestingly, when you walk downhill, it's fine to bring the world up continually. So it's really just been a process of trial and error, of trying things out, and then when one doesn't feel right, trying to tweak it until it feels okay. It's very non-intuitive sometimes what those things are that cause problems.

Yeah. One of the things I will add, that Parker touched on, is the flying thing. A lot of people want to look at the terrain from an orbital view to get a bigger picture, but we found that when we did that, you're too high above the terrain and it makes people feel motion sick or unsteady. So we actually put a floor, like a fake floor, under there where it appears your feet are, and then people were fine. We just discovered that that works. So those are the kind of things we're just testing out every time we develop new features.

That's really cool. Thank you.

Hi. In reality, what role did the impact craters play in making an uneven terrain, whereby there are adjustments that have to be made?

No, we don't make adjustments for the impact craters. What we want to do is capture the craters as accurately as we're able to. So we take the images that the rover sends back, and from stereo correlation of the rover imagery we can derive the 3D geometry, in this case the geometry of the impact craters, and then we build that into our integrated mesh reconstruction. In fact, some of our users are specifically looking for the impact craters, so it's an area of interest for them.

They're nature's drills, man. They drill in and they expose the 3D stratigraphy of the rocks, so what a great way to view it, in 3D.

With the MRO constantly discovering impact craters,
say, for example, in 2010 and then most recently in 2012-2013, is there a frequency of the impact craters to allow you to substantiate the even ground, or to compensate for it?

So Mars is pretty heavily cratered, but as you can see in the images, it's a big planet. There are a lot of craters, but there's even more ground to drive around on.

Thank you.

Hi. I understand the concept where, in the future, you're going to have the helicopter that could go up. But what if the helicopter is sort of a mother ship and has four or five little drones on it, and they could go low or high, and they're going in five different directions, without very expensive hardware? Since we have so much technology now, if they split out and go in different directions, you may save a lot of time, instead of one day going up here and one day there. Just running it by you.

Yeah, that's an excellent comment, and actually something that we've been talking about here at JPL quite a lot. This first helicopter is going to be a great technology demonstration of how it will work, and now we're starting to think beyond that: wow, if this works, what else can we do? The idea of multiple helicopters at a single site to explore vast areas is something we're really interested in considering.

Hi. I was just curious if you've been approached by any filmmakers. Instead of sitting in a theater in the future looking at a screen, people could sit with their VR headset anywhere and be part of the film. A lot of the studios here, you know, Fox, Disney, they have some of those innovative solutions, like you mentioned, kind of a green screen before you get to do it, right? What if you could be on Mars and act there, and actually do your action?

What's really interesting, I think, and it relates to the previous question, is how entertainment, you know, excites things that we do here, and
back and forth. The movie studios, the entertainment industry, they create these crazy visions of the future, and then we build them, right? And then when we build them, that gets them excited, and they think about a future future, and it just keeps feeding into the loop. So I think it is very exciting for us. We do talk a lot with these studios, not only about the technology but about what that allows us to create.

Excellent. Thank you.

Do you have any plans to open source the core components of your technology?

We don't have immediate plans to open source the OnSight technology. However, we are working on open sourcing the terrain rendering engine that we've built to render the Mars terrain. That's not quite ready yet. Check back with the Ops Lab page later on; it should be coming online probably in the next half year or so.

Okay. So the information will be on the website, and then we'll be able to check it out?

So that website is opslab.jpl.nasa.gov.

Okay, yeah, thanks.

You mentioned briefly, and I like this especially as a scientist, that the whole environment feels very much like a field, like going out into the field, except you're going onto Mars. So I'm kind of curious whether you see any future improvements. I feel like there's going to be some sort of disconnect: obviously you can make a direct observation of the material, but do you have direct access to, maybe, data on its composition? Obviously you can't actually engage with the material to see, like, how brittle it is. And on top of that, have you guys actually dealt with the issue of taking field notes? Are you going in and out of the VR, which I feel might be a little disorienting, or can you actually take field notes while being in the environment?

Yeah, so those are great questions, and something that we worked closely with Alice on, actually, when we were developing it: how much data should we show? Should we be able to click on a rock and have a composition come up? What we found was that sometimes too much is just too much, and it just overwhelmed
the interface. I'm sure you can talk more about that. But in the end, we have multiple tools. We can use OnSight to orient ourselves, get the basic lay of the land, get the basic geology, and then when we need to go deeper into, you know, what is the composition of this particular rock, we can go to our computer screen and look at those data in that medium.

Yeah. We have a lot of the capabilities to display that data, but it's a matter of what is useful in the moment. What we found is that people kind of just want to walk around on Mars. You get so much information by walking around, because the geologists who are walking around on Mars in OnSight trained to be geologists on Earth, where they were used to collecting data spatially by walking around. So we found that that's the most useful thing for them. But to your point, I know that one of our users would don the headset, look around, go to the highest hill, and start drawing maps, because you could see the whole terrain. That was his version of field notes, as you say. So there are ways to do it. It's just using it as a window into another world, and then whatever's useful to you, in this case maps.

We're always thinking. When we first started this project, we drew out these storyboards, much like in film, and the last storyboard we had was two scientists picking up a rock together with their hands and studying it, feeling it, getting some statistics out of it, and putting it in their virtual pouch, right? The technology today is not quite there yet, but we do see a roadmap for that to be possible. Now imagine you see this rock you really love. Abby picks it up, she studies it, and then weeks, months down the line, you
feel déjà vu: yeah, I saw that before, right? And with machine learning, AI, these new technologies, it recognizes every past rock that you have studied, or have not studied, and recommends, just like Amazon does, other rocks you might be interested in. It makes perfect sense, right? We do it every day today, so why not for Mars? So yes, we're thinking about all sorts of cool technologies, but relating it back to the user and what's most effective in their use case.

One more point on your question. You mentioned that it might be disorienting to pop in and out of the virtual environment. The HoloLens is not a completely VR device, so you have some peripheral vision left over, which allows you to take notes on pen and paper fairly effectively. We originally played with some ideas to make that even more integrated with your desktop tools, where we would recognize where your computer monitor was in the world and cut that out of Mars, so as you looked around you would see Mars everywhere, and then your computer screen on Mars. That's something that I would like to pursue in the future, to make these immersive tools more tightly connected with the tools the scientists ordinarily use. We found that some things work really well in immersive environments, like looking at 3D data from multiple perspectives, and other things actually don't work all that well, like looking at bar graphs or text. That works pretty well on a 2D screen, and putting a 2D screen into an immersive display is kind of missing the point. So I'd like to see all of these tools working seamlessly together, so you can use the immersion to get the context, click on a rock, see that rock pop up in all of your other science tools, dial it in a little bit and see the point change in the immersion, and again seamlessly go back and forth, really as if you're just using one tool that's part immersive and part 2D.

Thank you.

Hello. So currently you're using the tool with existing data sets, which are images, which is also pretty cool, because presumably you could integrate that with all previously existing data sets as well. But beyond the technologies developed and shown, I'm wondering if you have considered adding additional sensors, like maybe temperature
or perhaps ambient noise, and creating a more full experience there, to capture more of the senses.

Yeah. So in the case of the Mars exploration work, we are limited to the sensors already onboard the vehicles. We focus mainly on the visual imaging, though there are scientific data sets that are collected too. We have looked at a few ways that we could bring those into the immersive environment as well, and the cases where it makes sense are where the data is inherently 3D. Actually, one that is kind of interesting, that we're considering for 2020, is an instrument called RIMFAX. That's the ground-penetrating radar that's going to sense beneath the surface of Mars as the rover drives. We've thought a little bit about how we could visualize subsurface information in an immersive environment, and whether there would be additional information that scientists could derive from looking at it in that way.

Hi. You guys put a lot of emphasis on, when
building your environment, that you use the pictures from Curiosity and no computer generation. So my question is: how do you tell Curiosity where to take pictures so that it does it most efficiently, since I doubt taking a picture of every inch of Mars would be very efficient, but then also be able to combine all those pictures into the 3D environment without any loss of data in certain areas where there's just not enough to make a full picture?

That's a great question. How about I address that from the technical side, and then Abby, maybe you can add some science insights.

So the way that we approach 3D reconstruction is to take the images that the rover sends back and use the stereo correlation between the pictures to derive 3D geometry. Then a big research area for us has been finding very accurate solves between positions where the rover captured imagery, so that we can combine imagery from different points of view and get a more complete view of the environment. We do that with a variety of computer vision techniques that we've tuned for this particular use case. There are a number of off-the-shelf tools that can do similar things, but we found that they don't work particularly well in the context of Mars reconstruction. If you're doing this on Earth, you can capture a lot of images: you can fly a drone around and capture tons of images, or you can walk around with your SLR and capture as much data as you need. But when we're trying to reconstruct Mars, we're limited to the images that came back over that 56k modem connection. They tend to be fewer in number than you would typically have on Earth, and they tend to be captured from farther apart and with less overlap. So a lot of the custom work that's gone into this reconstruction pipeline has been developing heuristics that can make useful reconstructions
even when you have limited data, and we'll use as much or as little data as we have. When we just arrive at a new place, we may only have two pictures of the ground in front of the rover, and everything else we'll just fill in from an orbital base map; then we'll add more as the rover downlinks more imagery. So we try to eke out as much as we can from the imagery that we have.

Yeah. And then to answer the question of how we figure out what pictures to take with the rover: that's something the science team gets together on first thing in the morning, and we all fight it out to figure out, given the amount of time we have every day and what our data volume for that day is, what is reasonable to take pictures of. We often start with lower-resolution grayscale images that cover wide areas, and then we use our geology eyes and say, that rock looks particularly interesting, or that relationship, we need to image that. So we pick little postage stamps that we want in high-resolution color images. In some places that are really special, or if we've been driving for a while, we'll take a 360-degree color panorama, but those are very large data products and they take a really long time, so we don't take them all the time. But we often do have this grayscale 360-degree coverage for most of our spots.

Hi. A year and a half ago at Kennedy Space Center, I had the opportunity to use the HoloLens in a Mars environment hosted by John Glenn. Is it the same technology? If not, what is the difference?

Yeah. So the experience that you're referring to is called Destination: Mars, and that was one of our first public outreach spin-offs of OnSight. We took the core technology behind OnSight, took out the science planning features, and replaced them with a wrapper that turned it into a museum experience led by a holographic
capture of Buzz Aldrin and Erisa Hines, one of our rover drivers here at JPL. You go on a guided tour of three sites on Mars and learn about the current rover missions, the history of the planet, and future human exploration. I think what's really cool about Destination: Mars, and Access Mars, which Victor mentioned earlier, is that they use all of the same terrain data products that our scientists here at JPL get to see. So I think it's a great way of letting the public experience that in the same way that our scientists do, yes.
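
Editorial aside on the terrain data products mentioned above: the speakers say the 3D geometry is derived by stereo correlation of rover image pairs. As a minimal sketch of the textbook relation behind that idea (not JPL's actual pipeline), assuming an idealized, rectified stereo pair and a pinhole camera model, depth follows from disparity as Z = f * B / d. The function name and the focal length and baseline values below are illustrative assumptions, not real rover camera parameters.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Distance in metres to a point matched in both images of a rectified stereo pair.

    disparity_px    horizontal pixel shift of the point between left and right image
    focal_length_px focal length expressed in pixels
    baseline_m      separation between the two camera centres, in metres
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero would put the point at infinity")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera values chosen only to make the arithmetic easy to follow.
FOCAL_LENGTH_PX = 1200.0
BASELINE_M = 0.4

if __name__ == "__main__":
    for disparity in (96.0, 48.0, 12.0):
        z = depth_from_disparity(disparity, FOCAL_LENGTH_PX, BASELINE_M)
        print(f"disparity {disparity:5.1f} px -> depth {z:6.2f} m")
```

Small disparities map to large distances, so a one-pixel matching error matters far more for distant terrain than for the ground near the cameras, which fits the speakers' remark that sparse, widely spaced images make reconstruction harder.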

Thank you. I'll try it again. I'm sure you've probably thought about this before, but we're all under budget constraints: companies, individuals, corporations, even agencies. In the same way, pharmaceutical companies, to find one more thing to cure one little ailment, may spend a hundred million dollars on it. Is it possible, if you haven't considered it before, that JPL could go to the different companies, Disney, the media companies, and offer a sale, like putting up a big billboard, whatever it is? A hundred ten million here, a hundred million there, it's not that much money; I'm sure it's something you've thought about. It's the same money spent on a stadium or on advertising. It'll really be a big hit if somehow you get media or some corporation to sponsor, or even to concentrate on working on, some type of software or something, instead of it coming from your budget. Let it be expanded. Let them get all the credit, whatever it is, but it's to the benefit of all of us.

Our commercial partnerships office works with companies all around the country and the world to figure out how to best leverage our technology for their individual fields. So we do have a lot of interaction with commercial industry, not only leveraging their technology for our applications but then sharing back our work if it helps them do their work more effectively. The Access Mars experience that Victor mentioned earlier was a collaboration with Google, and Destination: Mars, or excuse me, actually OnSight itself, I meant to say, was at first a collaboration with Microsoft. So we are interested in leveraging commercial partnerships where we can.

This question is for, I think, Abby. What fraction of your day do you spend using the OnSight tool, and do you see that fraction increasing over time? And if so, what features would be, like, most useful, I guess?

It
really depends on the day and what I'm doing. On days when I'm on shift, I usually use it for 10 or 15 minutes: walk around, orient myself, figure out what's going on. There are some days I use it for longer, especially days when we do collaborative sessions, which we call Meet on Mars, where we spend an hour and have team members from all over the world dialing in. Our avatars come in and we have our science discussion for the day on Mars. Those are really fun. It's collaborative, you work as a team, and you bond kind of in the field: there's Jon over on the hill over there, off on his own, and we're over looking at this rock over here. So yeah, it depends on the day. But I don't know if I see it increasing a whole lot in the future. I think the way it's been working has been really good. I'm excited to see how it turns out with the next rover, because in that case the tool will have been integrated with the rover when the science team gets brought on initially, so they might view it in a whole different way. Looking forward to how that's used.

Yeah, and one point I wanted to mention is that I don't really think our scientists need to use it more, because I think the beautiful thing about it is that they can put it on, look around, and go, oh, I get it now, I see where we are. I don't need to spend thirty minutes looking at pictures on my computer screen to get oriented about where the rover is right now or where we're going to go. I really like that it's a quick tool that quickly gives you an intuitive understanding of where you are. So it might not ever increase; it might stay at ten minutes a day, and maybe that's where it's supposed to be.

Yeah, I think it's likely that it will also be many short sessions throughout the day as you're switching between different tools: you're using this to get your initial context,
then you're going to another tool, coming back to the immersive context later on, and so on throughout your day. So I'd like to see a tool that becomes something that's effortless to switch over to whenever it's helpful to you.

Yeah. Sometimes when there's a break in the day, it's kind of nice to just put it on and be like, man, my job is really cool. This is really cool.

Thank you.

Are you thinking about creating an avatar machine, where you actually go there? That's right. You think it's impossible? Leave this to the software developers. You actually could smell, taste, and feel, and actually be there, with that kind of technology. I think you would have thought of that. This taking pictures is not getting you anywhere.

It's definitely something we've thought about. I think this is getting back to an earlier gentleman's question of whether we've thought of incorporating other sensor data, like sound, or in this case smell. Again, we're limited to the data that we get back from the rover. So smell, I think we might be a couple of rover missions away from that one.

Sound, yeah. The next one does have a microphone, so maybe we can bring in sound.

So I think, yeah, probably not temperature. Mars is a little too cold. We try to find the happy medium between feeling like you're on Mars but not feeling too much like you're on Mars.

Well, I think it's possible. It's very possible. Look where we've gone already, look what we've done. I think we ought to start thinking like this. Okay, thank you.

Thank you.

Hi. I think my question is to Abby. I understand that you're saying that each mission, say Curiosity, has a certain window in which you can talk to it and get data. So I'm just wondering, because it has something to do with the direction the planet is facing, where the vehicle is, as far as all the other vehicles that are, you
know, in space: are there efforts being made to increase the bandwidth so you can talk to multiple missions simultaneously, or can you only talk to one mission at a time with the technology that we have right now?

Yeah, that's an excellent question. One of the biggest limiting factors on communicating with the rovers on the surface of Mars is, one, pointing: we can't talk to the rover if we're on the other side of the planet, or, more importantly, if it's on the other side of the planet from Earth. But we almost always talk to the rovers via the orbiters that we have orbiting Mars. It's a lot easier for the rovers to use their radio to talk to an orbiter that's nearby than to talk all the way back to Earth, and the orbiters have much bigger antennas and more powerful radios that they can use to talk to Earth. So that's one of the factors that limits how often we can communicate. But the idea of continually improving these radios and increasing our data volume rates is something that the engineers here are working on. Also consider that around 2020 we're going to have a fleet of rovers heading to the surface of Mars, and some of them might land near each other. InSight is landing pretty close to where Curiosity is, and we're going to have to start to share those orbiter passes. So absolutely, thinking about technology so you can use your orbiters to talk to multiple rovers at different places on the planet at the same time is, I hope, something we're going to need to really worry about in the future.

Thank you.

Hello. First of all, pardon my English. I understand that Mars is really red because of the iron in it. How do you deal with the camera lenses, with that iron dust that really sticks to the lenses?

So some of the cameras have lens caps, and in fact those pictures that Victor showed at the beginning of the talk
had the caps on them right before they popped off. So some of them we cover with lens caps; some of them we make sure, at the end of the day, we stow looking down so they don't get dusty. In general, they don't accumulate too much dust over time.

And the other thing is that, you know, I'm pretty sure there is more than one person who'd like to know where you are exactly on the planet. Most of the time I see the pictures and I have to deduce where the shadows are coming from, because I would love to know where north and south are on Mars.

Yeah. It would be similar to Earth. You can look at the shadows the same way on Mars as you can on Earth. It spins in the same direction
as Earth, so you'll see the Sun rise and set in the same areas of the sky.

Right. Is there any map? Pardon my ignorance, I haven't followed this very much.

You can just google both rovers that we have on Mars, Opportunity and Curiosity. You can go to the websites and look at their traverse maps, and it's all publicly available.

Thank you.

As someone who really enjoys role-playing games and stuff online, I was thinking that this is an amazing educational tool for, say, training graduate students or astronauts, especially in super exotic environments. So, like, [unclear], maybe you even have a grading system where if they pick up the wrong rock, they lose 10%. Maybe they'd hate that. But in all seriousness, I see it as something where you could very precisely train someone to work in a specific environment, because astronaut missions, I believe, are scheduled down to the minute, ideally, because you have so little time and so many science goals. So to train people how to work quickly and effectively, it seems like that has high potential. Do you see the ability to add, I guess, digital object feedback, where you can tell if someone's understanding the concept you're trying to teach them, or if they're grasping the right object, that kind of thing?

We're doing a lot in the area of training, both for astronauts and for operators on the ground. The ability to do something before you do something is super valuable. And yes, the technology is improving in terms of recognition of your workspace and the completion status of your workspace, and it's only going to get better over time. We have a mission to test this out, actually, at NEEMO this fall. NEEMO is NASA Extreme Environment Mission Operations; it's basically a submarine off the coast of Florida where the astronauts train before they go to the space station. So we're going to test some of these capabilities. What
if you had spatial procedures in your world that tell you what to do, in the same way that you might watch YouTube to show you how to tie a tie or fix some plumbing? What if those instructions were in the world with you, right? How much better would that experience be? I think this is going to be the future of how we train everything, especially in schools.

Thank you very much.

I noticed it is past time, so if you guys do need to take off, we will be here for a little while longer to answer your questions. But I just want to thank everybody for coming today. We had a great discussion, and we hope to talk to you guys.

2018-07-13 16:34

Japan guy

Is there a way to understand the stack JPL is using? Are your API's open or extensible? Are you using Python? R?

Started 12minutes after

Is Abby Fraeman Jewish ?

Very interesting talk. I'm amazed by some of the high fidelity environments that have been created from images from a rover not designed for VR/AR applications.

Use lasers to communicate, not to do damage. Spread more mini satellites, like mini servers, and we will get real-time interaction, with cameras filming in real time, not focused only on a map, please!

Wow

Who play's SIM city? Please comment soon.

Just asking who play's SIM city.

Why are you here?

Awesome job JPL❤️❤️

Very interesting stuff. These technologies should become quite useful in the future.

Your Magnetic Reconnection ideas are OFF. I know you see the Fields interacting. The Earth is LOCKED in the Heliosphere by the Magnetosphere. The Gears of motion driving the Space-Time Clockwork. Multi-Layered reversed polarity counter-rotated Fields invisible to your scientific instruments. Im sorry. The Clock does work any other way imaginable. Even if I spent 30min typing. Nobody is interested in Questioning themselves. All while every other science report claims "We dont understand". I guess you have to know what you are actually observing 1st based on ideas that actually work mechanically like Clockwork to begin w/ before Jupiter is crystal clear. The X-Ray vision of the Sun's impossible rotation.

Shane Creamer WebVR was one.

Very Interesting video

You KNOW the Magnetosphere is layered. Did you know the Fields could rotate individually? . . . . *I DID NOT KNOW* but I know the Clockwork of our Solar System orbits could not possibly work w/ such precision accuracy for yall to predict future Eclipses & other Solar events by any other method imaginable. Far more impressive than any manmade Clock I ever seen on Earth. I know How it actually works.

NASA - The Earth is LOCKED between two counter-rotated Fields. Jupiter shows how the Fields are counter-rotating in overlapped cylinders. The Field flattened at the Equator & overlapped by a polar opposite Field continuously. Such fully explains the Sun as well. As the Sun rotates as a Rubix Cube in broken + separated cylinders just like Jupiter. 25 Days at the Equator then linear in perfect symmetry toward each pole into 35 Days. The counter-rotation exists but invisible hidden in the plasma. Jupiter is the Rosetta Stone towards understanding the Sun's impossible for NASA & Scientists to explain rotation. The Space-Time Clockwork is REALITY & is driven mechanically using locked into place Field interaction + counter-rotation just as Gears in a Clock work.

Cant wait for the humans to get their hands on Mars! So excited

Daniel Husivarga , they at the store boss. Many different flavors nowadays. They cost about 150% more now because reasons tho.

+Alisha Lindeen , the correct answer was "Design, As per R&D programming specs in the creation of this vector to overwrite/prevent corrupt code to stabilize this machine.

Last on first off hahaha.

Is this a microsoft hololens ad?

That's just the easiest interface to write to that is available to pretty much all the researchers.

Be still, my heart!

Way too much MKUltra going on here.


Go first at moon again put ppl there and then talk about Mars... Idiots

The 400th like is mine!

Great Job JPL!