Interactive ad-hoc analysis at petabyte scale with HDInsight Interactive Query

All right, let's get started. Welcome, and thank you for coming to the session. My name is Ashish and I'm a product manager with the Azure HDInsight team. Today we will spend the next hour talking about HDInsight Interactive Query and how you can do interactive analysis on a very, very large data set that is sitting on commodity storage systems such as Azure Blob storage or Azure Data Lake Store.

But before I go deeper: how many of you have heard of Azure HDInsight? Okay. How many of you have actually built a cluster? Three of you. And how many of you have done a production deployment? A few. Great, excellent.

If we think about the interactive pipeline, and if you're working in the BI space or the big data space, this is the usual architecture that you come across. You ingest data from various data sources. Then you transform the data: you need to clean it, prep it, and transform it. Then, depending on which engine you are using to work with this data, you convert it. If you are using Hive, for example, you convert that data into the ORC file format, which is the optimized file format for Hive. If you are using, let's say, Spark, you convert that data into the Parquet file format, which is the optimized file format for Spark, to give you great speed. In some cases you would also load that data into a data warehouse or a relational store: maybe you have a Teradata system, a Netezza-based system, or maybe Oracle or SQL. Once your data lands there, you start serving it to your BI analysts, your data scientists, and so forth.

One of the challenges that we are seeing comes with the explosion of big data and data growth. Right now, if you think about how much total data we are producing on this planet, it's about 30 zettabytes, and by 2025 it is going to be 163 zettabytes. This is an amazing amount of data that is getting produced, and we see the implication of that in our pipelines as well. We are now ingesting more data than before. We need to transform more data than we ever did. We need to convert more data into these optimized file formats than we ever needed to in the past. And loading it into a store is also becoming problematic.

Now think about this problem from the perspective that you are getting, let's say, anywhere between 10 and 100 terabytes of new data every single day in your systems. How are you going to transform all of that data? How are you going to convert all of that data into one of these optimized file formats? Just to give you an example: I was converting a hundred-terabyte data set from CSV to ORC. It took me three days and a sixty-node cluster to do that conversion. Imagine you have to do that on a daily basis: you just cannot keep up. What's happening with a lot of our customers is that these processes are taking more and more time than they took yesterday, or the day before yesterday, or a year before that. So the BI analyst, who is supposed to get that final, prepped, clean data, is either not getting all of the data or getting it very, very late. They are actually working on really stale data.
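To make the "you just cannot keep up" point concrete, here is a rough back-of-the-envelope sketch using only the figures quoted above (100 terabytes converted in three days, up to 100 terabytes arriving per day). Decimal units and the seven-day horizon are assumptions made for illustration.

```python
# Back-of-the-envelope check of the conversion example quoted in the talk.
# Assumptions: decimal units (1 TB = 10**12 bytes) and a constant ingest rate.
DATASET_TB = 100          # size of the CSV data set that was converted
CONVERSION_DAYS = 3       # time the conversion took
DAILY_INGEST_TB = 100     # upper end of the quoted daily ingest range
SECONDS_PER_DAY = 24 * 60 * 60


def conversion_rate_tb_per_day(dataset_tb, days):
    """Average conversion throughput in TB per day."""
    return dataset_tb / days


def backlog_after(days, ingest_tb_per_day, convert_tb_per_day):
    """Unconverted data (TB) that piles up after `days` of steady ingest."""
    return max(0.0, (ingest_tb_per_day - convert_tb_per_day) * days)


rate = conversion_rate_tb_per_day(DATASET_TB, CONVERSION_DAYS)
rate_mb_per_s = rate * 1e12 / SECONDS_PER_DAY / 1e6
backlog = backlog_after(7, DAILY_INGEST_TB, rate)

print(f"conversion rate: {rate:.1f} TB/day (about {rate_mb_per_s:.0f} MB/s)")
print(f"backlog after 7 days at {DAILY_INGEST_TB} TB/day: {backlog:.0f} TB")
```

Under these assumptions the cluster converts roughly a third of what arrives each day, so the unconverted backlog grows without bound unless the cluster is scaled up or the conversion step is skipped.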
If you understand the value of data, it is most useful the minute it gets produced. Somebody made a credit card transaction: that is very valuable data.

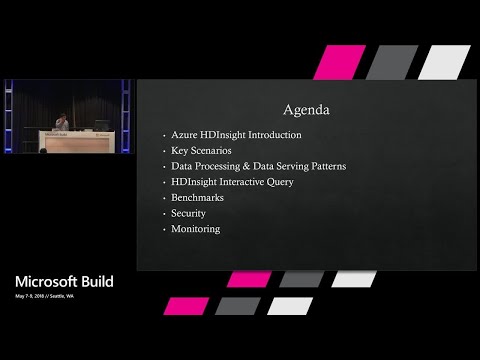

A week from now it still has some value, but it is not really that valuable. I think that is the question everybody has to ask. So this session is all about how you can interactively analyze big data stored in cloud storage without doing all of those steps that we just talked about. Do you need to do those steps for some part of your data? Absolutely, yes. The point we're making is: when you are receiving that hundred terabytes every single day, how do you think about that?

But before we talk about that, from an agenda perspective: we're going to talk about Azure HDInsight and some of the key scenarios our customers use HDInsight for. We're going to talk a little more about some architecture patterns with respect to data processing and data serving. We're going to go deep into Interactive Query. We're going to see some benchmarks: we'll run some very complex queries, show you the results, and also talk about some benchmarks. Security is very important in any enterprise scenario, so we're going to talk about security and then monitoring of the solution. While I talk, we're going to have a few breaks in between. If you want to ask questions, you can come up to the mic so that other people also hear what the question is.

For those who do not know what Azure HDInsight is: it's a managed Hadoop and Spark cluster service in Azure. What we provide is what we call workload-optimized clusters. One of the challenges we have seen in the traditional Hadoop and Spark world is that a lot of customization is needed to make something run well or be very performant. In a normal Hadoop cluster there are about a hundred different configuration files, and each configuration file has about 100 flags, so in total about 7,000 different configuration settings. Our customers on-premises grapple with which setting to change and what not to change. Because, as a service, we run tens of thousands of these clusters every single hour, we learn from all these customer patterns and usage, and we bring all of that knowledge into these workload-optimized clusters, so that the moment somebody creates a cluster it is highly optimized and ready to go. We even tell our customers that our best configuration is our default configuration. If you change that configuration, you'll be deviating from a very highly tuned system. You are allowed to do it, but know the implications.

The other aspect, which is very unique and differentiated, is what we call the monitoring and healing service. We monitor every cluster that runs within the service. When we say monitor, we not only monitor the infrastructure components, such as the storage, the virtual machines, the metastores, or the network (of course, we are a cloud service, we have to do that), but we also monitor the open source services running within that cluster. Think about a scenario where you might be running YARN and Hive: we keep checking the health of YARN, Hive, and HiveServer Interactive. If a problem happens with one of these foundational components, we have built a service in the background, which we call Sybil, and this is a repair service. When we see an issue and it's a known issue, the healing service just goes ahead and patches that cluster or fixes the issue. We won't be able to fix all issues. When we cannot, the healing service creates a support ticket and routes it to our on-call. This is very unique in this space, if you think about it.
We have a lot of focus on productivity, and I'll show you some of the tools. If you think about the various personas involved in the data chain, all the way from the data developer who may be writing that Spark job or Hive query, to a BI analyst who might be consuming this data, or a data scientist who might be consuming it via Jupyter or Zeppelin, we work very hard to make sure that they are productive and that all of these tools work.

We provide great compliance, security, and SLAs. Again, we are an enterprise service, and our enterprise customers keep us awake and make sure we keep advancing there, so we're going to talk about that.

We have a curated big data marketplace. This is a very nuanced space. Every customer has slightly different needs, and so there is a great desire to bring their own tools or their own solutions on top of HDInsight. So we have built this curated big data marketplace, where we work very closely with some of our partners, and our customers can very easily provision these applications on top of HDInsight and take advantage of them.

We have a per-minute billing model with no special services or support contract required. Open source is free, right? You can go to GitHub and download all of the open source you want. The way people make money in this business is at the back end, by selling you support services or consulting services and whatnot. In the case of HDInsight, we thought that is a big problem and we need to solve it. So there is no special support contract required: we provide support on the entire service, not only for the Azure bits but also for the open source bits.

If you have not seen this before, you could also do all of this with an ARM template, the CLI, or PowerShell. Go to Data + Analytics and click on the cluster type. We have Hadoop, HBase, Storm, Spark, R Server, Kafka, and then Interactive Query, which we're going to talk about today. Then, if you want multi-user access and role-based control for your cluster, we have something called the Enterprise Security Package. This provisions Ranger as part of your cluster creation, so you can define your policies. We'll talk about that.

One important aspect of HDInsight is that we do not use HDFS. We rely on cloud storage systems such as Azure Data Lake Store or Azure Blob storage for our storage needs. We do have a local HDFS in the cluster, but that is typically only used for keeping intermediate job results and things of that nature; we do not recommend using it.

These storage systems are really great in the sense that they are proven at cloud scale. We often hear complaints from customers that, on-premises, managing and scaling HDFS is one of the hardest parts of this whole ecosystem. In the cloud we offload that to fully PaaS services that make three copies of your data anyway, which gives us a great advantage. It also lets us do something very interesting, which is decoupling your compute and storage. Once that happens, you can have a scenario where you have a petabyte of data sitting in a blob store and you are really not paying a lot, and you may not even have a Spark cluster or Hive cluster running on top of it, which is great agility for our customers.

Then you pick nodes: what kind of node you need for the worker nodes, the head nodes, and ZooKeeper in this example. Then we let you pick one of the marketplace applications I talked about, for very specialized needs. Once you do that, your cluster will be up and running.

Now, once people create clusters, what do they do with them? The key scenarios for us: one of them is ETL and batch, which is quite a classic scenario for these kinds of systems. Interactive exploration, where people use Hive on LLAP, which we're going to talk about today, or Spark SQL. Data science and machine learning. Streaming, which is a very popular scenario.

A lot of our customers get data from various line-of-business systems or IoT systems via Event Hubs or Kafka, and then they do stream processing using Storm or Spark Streaming. Traditionally our customers used Storm quite a bit, but we see a clear trend where people are favoring Spark Streaming for their streaming needs. Once they process the data, they put it into HBase for hot-path analytics, or Cosmos DB for analytics, and for the cold path they just dump data into Azure Blob storage or Azure Data Lake Store for further processing. The last one, and we have been seeing quite a bit of this in the last year and a half, is where people already have gigantic Hortonworks or Cloudera clusters and simply want to migrate them to HDInsight. Those are the key scenarios that we see, but today's focus is going to be on the interactive exploration part.

Getting a little deeper into this idea of the elasticity of the cloud, where you can separate your compute from storage: as I said, we do not use HDFS, so all of your data can be stored in Azure Blob storage or Azure Data Lake Store, and you are practically getting an infinite amount of storage. You get a very high level of bandwidth. In the newly announced tier for Azure Blob storage you can get up to 50 gigabits per second of bandwidth from one storage account, and they plan to go up to 100 gigabits per second by the end of this year. And if you are using Azure Data Lake Store, the claim is that there is no bandwidth, capacity, or IOPS limit on those stores. So they are really scalable systems in terms of where you store your data.

In terms of the HDInsight cluster, you can scale this cluster up and down, or wipe it off, and it doesn't really matter, because your data is in the blob store. Not only that, you can do something very interesting: you can bring multiple different clusters against the same data and the same metadata. We see that happening quite often with our customers. Earlier there used to be one gigantic cluster and everybody used to come to that one cluster, so workload management became really challenging and there was real resource contention among users. What's happening now is that people are creating user-focused or department-focused, purpose-built smaller clusters, talking to the same data and metadata.

So there are three promises that this architecture makes to you: compute is stateless and on-demand; storage is cheap and limitless; and the network is fast, so when you add more nodes to your cluster, or more clusters to your architecture, you get more bandwidth to the underlying storage.

What kind of patterns does this architecture enable? If you think about this diagram here, especially from a data processing and data serving perspective, our customers typically separate their clusters into what we call on-demand processing clusters and data serving clusters, all of them talking to the same underlying storage and the same common metadata store.

Think about the on-demand processing cluster. Let's say your pattern is that you receive data every hour and then you need to process it. You get that data into the blob store or Azure Data Lake Store, and then you orchestrate a cluster creation: an HDInsight cluster is created for you. Once the cluster is up and running, you can run any kind of processing framework you want: Spark, Hive, MapReduce, Pig, it doesn't really matter. Once you have processed the data, you put the data into, let's say, an output directory, put the metadata into the metadata store, and then you tear down the cluster. Ninety percent of HDInsight clusters do not see a second day, so this is a very prevalent pattern with our customers.

How do people orchestrate this? From the Microsoft side, if you are familiar with Azure Data Factory, a lot of our customers use Azure Data Factory to do this orchestration. Others have written their own orchestration, because all of our APIs are REST-based and we provide ARM-based templates, so there are a few who have done it themselves.
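The create, process, tear-down cycle described above can be sketched as a small orchestration wrapper. This is only an illustration of the pattern, not the HDInsight or Azure Data Factory API: `create`, `delete`, and the in-memory stand-ins below are hypothetical placeholders for whatever REST calls or templates a real orchestrator would use.

```python
from contextlib import contextmanager
from typing import Callable, Iterator, List


@contextmanager
def ephemeral_cluster(create: Callable[[], str],
                      delete: Callable[[str], None]) -> Iterator[str]:
    """Create a cluster, hand it to the caller, and always tear it down."""
    cluster_id = create()
    try:
        yield cluster_id
    finally:
        # Runs even if the job fails, so no idle cluster keeps billing.
        delete(cluster_id)


# Hypothetical in-memory stand-ins for real provisioning and job-submission calls.
events: List[str] = []


def fake_create() -> str:
    events.append("create")
    return "cluster-001"


def fake_delete(cluster_id: str) -> None:
    events.append(f"delete {cluster_id}")


def fake_job(cluster_id: str) -> None:
    # Output data and metadata would be written to shared storage here.
    events.append(f"run job on {cluster_id}")


with ephemeral_cluster(fake_create, fake_delete) as cluster_id:
    fake_job(cluster_id)

print(events)
```

Because the data and metadata live outside the cluster, deleting the cluster in the `finally` step loses nothing; that is the property the pattern relies on.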

Now, this is a great thing from a cost-saving perspective, because you are not keeping your cluster up and running 24 by 7. You are using purpose-built, very optimized clusters: they crunch the data and then they go away, and customers save a lot of cost because of that.

The second pattern is around what we call data serving clusters. Think about this scenario: we all represent very large organizations with lots of departments and lots of personas. A tool liked by one data scientist may not be liked by another. I like Spark; you may like Hive; somebody else in the organization may like Presto. What you can do with this architecture is bring all these different technologies against the same data and metadata, and have all these different personas come to HDInsight, and you can serve them very well. You can independently scale these tiers and add more clusters and more computing power. If a department within your organization is pretty big into analytics and needs quite a bit of capacity, you give them quite a bit of it. If there is a single person trying to drive some analytics, you give them a very small cluster with the technology they want. We see this work very well.

Once you have this architecture, it's all about personas and how these personas interact with these systems. The way we think about it: what would your BI analysts need, what would power users need, what would data engineers need, what would data scientists need? For example, from an analyst perspective we have invested pretty deeply in Power BI and Excel.
We work with Tableau, for example, and DBeaver. I'll do some demos with Power BI; especially with HDInsight Interactive Query, which we're going to talk more about today, we have a DirectQuery connection. So imagine you receive a new CSV file in your blob storage and your dashboard refreshes. We will show you that.

Then, for power users, there is ODBC, JDBC, and Beeline connectivity.

Developer productivity is very important, so we have made investments in Visual Studio Code. How many of you use Visual Studio Code? There is an HDInsight plug-in for Visual Studio Code, so you can write Spark code or Hive code in Visual Studio Code and then submit that code to the cluster. With respect to IntelliJ, we have done very deep integration, especially with Spark, where you can actually run a Spark program on HDInsight from IntelliJ and put a breakpoint right inside your driver program and executor program. That is amazing if you think about some of these Spark programs and how difficult it is to debug them and get them right. This debugging support inside IntelliJ helps the developers working with our platform to be more powerful. And then, of course, Zeppelin and Jupyter notebooks come with it.

All right, so this is great, but why HDInsight Interactive Query? When I started, I talked about this problem around ingesting a lot of data: every day we are getting a lot of data, this whole pipeline is becoming more and more expensive every single day, and serving fresh data is becoming harder and harder. The second problem is that, as I said, we use cloud storage.
We use Blob storage or Azure Data Lake Store. These are amazing, massively scalable storage systems, but they are remote storage at the end of the day. There is latency involved: they are 20 milliseconds away from the cluster. So the question becomes: how do you give a very fast response if you have a latency problem as well?

So let's get into a demo. Let's switch to demo mode. Can you see it? If you're in the back, I'll make it a little bit bigger. Can you see it now in the back? Otherwise you're more than welcome to come to the front.

This is an HDInsight Interactive Query cluster. I keep my clusters named like "do not delete" because my colleagues are very conscious about cost and they're ready to delete things. If you look at this cluster here, in the worker nodes it's a four-node cluster, and then we have two head nodes and three ZooKeeper nodes. We keep two head nodes for high availability.

Let's say you're running a job and one of the head nodes goes down: we just fall back to the second node and nothing happens to your job.

For this cluster I actually used Azure Blob storage, so you can see there are a couple of storage accounts associated with this cluster. You can get into a storage account and see all of your data. So the data is living in a blob store, as I'm showing you, and we have created an HDInsight cluster on top of it.

Now let's start from the very basics. I have a very simple CSV file. This is data that I downloaded from Zillow.com, and it tells you in which cities in the United States you are going to see the most appreciation in real estate. That's what this data is: a CSV file that I downloaded. My goal is to start interactively analyzing this data. It's a very small data set, but I just want to get the concept across.

To get started, I am now in what is called the Ambari dashboard for the HDInsight cluster. Ambari has multiple functions: one of them is cluster management and monitoring, and it also provides a bunch of tools for you to do things easily. How many of you have heard of Ambari before? Okay, cool.

What I'm going to do is go to something called Hive View 2.0. It is basically an interface for you to write Hive queries. But before I can write SQL-like queries against that data, I need to create a Hive table on top of it. So I'll click on New Table and select the option called Upload Table. In this case my file type is CSV and the first row is the header. This data could have been in a blob store or Azure Data Lake Store, and if I selected that I could have given the path to where the data is, but just to make it super simple I'm going to upload it from my local machine. This is the CSV file; I'm going to open it.

The moment I do that, this tool is able to figure out the columns. If you look here, there is region, region name, state, forecast: it was able to extract that metadata out of the file. Then I can provide some more properties; for example, I want the resulting file to be saved as text. And let's hit Create.

What this is doing is first uploading the file, putting it into the blob store, and then creating a Hive schema on top of it. Again, this is an external table, so your metadata lives in a relational store and the data lives in the blob store. So we did that.
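For readers who prefer to see what the upload wizard amounts to, the sketch below builds a HiveQL statement for an external table over a CSV file with a header row. It is an illustration under assumptions, not the statement the Ambari view actually generates: the column types, the `wasb://` location, and the helper name `external_csv_table_ddl` are hypothetical; only the column names and the table name `fc` come from the demo.

```python
def external_csv_table_ddl(table, columns, location, skip_header=True):
    """Build a HiveQL CREATE EXTERNAL TABLE statement for comma-delimited text.

    `columns` is a list of (name, hive_type) pairs. Plain delimited text does
    not handle quoted fields that contain commas; that case needs a CSV SerDe.
    """
    cols = ",\n  ".join(f"`{name}` {hive_type}" for name, hive_type in columns)
    ddl = (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS `{table}` (\n  {cols}\n)\n"
        "ROW FORMAT DELIMITED FIELDS TERMINATED BY ','\n"
        "STORED AS TEXTFILE\n"
        f"LOCATION '{location}'"
    )
    if skip_header:
        # Tell Hive to ignore the first line of each file (the header row).
        ddl += "\nTBLPROPERTIES ('skip.header.line.count'='1')"
    return ddl


# Column names follow the demo; the types and the storage path are assumptions.
ddl = external_csv_table_ddl(
    table="fc",
    columns=[
        ("region", "STRING"),
        ("region_name", "STRING"),
        ("state", "STRING"),
        ("forecast", "DOUBLE"),
    ],
    location="wasb://container@account.blob.core.windows.net/zillow/",
)
print(ddl)
```

The statement only records schema and location in the metastore; the CSV file itself stays where it is in cloud storage, which is what makes the table "external".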

Now, if I start writing something like select * from fc, because that was the table name, with a limit, and run this: all right, now you can see that I'm able to run SQL queries against CSV data in a very quick fashion.

Now let's say I am a data scientist and I'm using Apache Zeppelin. Again, this is connected to the same cluster, and I can run the same query here. Let's make the output visible. Yeah. So now I'm seeing the same data in Zeppelin, but Zeppelin also provides some visual charts, so I'm exploring that data visually. I can see that if I spend my money in Atlanta, I'm probably going to be richer next year. This is a very simple use case.

But then you're going to say: didn't we come here for a very-large-data-set type of problem? These are little Excel files or CSV files that you're showing me. So let's switch gears a little bit.

On the same cluster where I was doing this work, I have a database called tpcds_orc. This is a benchmark derived from TPC-DS. Do you know TPC-DS? TPC-DS is an industry benchmark for data warehouse type systems. There are 99 very complex queries that you run against data generated by this benchmark, and then you say how fast or how slow you are relative to anybody else. This benchmark is derived from the TPC-DS benchmark. What it has is a bunch of different tables; it basically mimics a store scenario, so you can see call center, catalog page, and all of that. In this case all of the tpcds_orc data is in the ORC file format and stored in Azure Blob storage.

Before this demo, I created a schema on top of that data, schema-on-read: external Hive tables, with the metadata stored in a metastore but the actual data in the blob store.

Now I'm going to use a tool called Visual Studio Code. Again, this is the integration I was talking about, where you can write queries in Visual Studio Code and submit them against the cluster. Let's go against the database I have, tpcds_orc. I'm using that database, with a hundred terabytes of data, and writing a very complex query where I have a couple of aggregations, data coming from four different tables, and a group by statement as well. So let's run this query. Let's see what happens. Okay, yes, it's executing the query. It's a very nice interface because it gives you the entire DAG information. As you can see, that query took 4.57 seconds to execute. Granted, it did not scan 100 terabytes of data.

But if we look at the DAG, you would see that even on, like, one map it scanned about 67 million records. That's quite a bit of data sitting on the underlying blob store, at very, very fast speed.

But you would say: didn't you say that converting into ORC and Parquet takes a lot of time? Of course we got fast performance, but didn't you run this query against that optimized file format, which is ORC? So let's change this a little bit.

Instead of going against the ORC database, on the same cluster I also have this database called tpcds_csv. The difference here is that the files are now just CSV files, again 100 terabytes of them. Now I'm going to run this query against the CSV files, and these are non-optimized files. So let's run it again. It's running. The results are back in 5.4 seconds.

That's the fundamental game changer, if you think about it. It was never easy to get good performance out of text. That's why people converted it into optimized file formats such as ORC, Parquet, or Avro. But if you can get this level of interactivity with such a huge data set on just CSV files, or JSON files, or XML files, that opens up the scenario where you can directly query the raw data that is sitting in a blob store or in ADLS and give very fast performance to the people who are trying to get to this data.

I just want to pause for a moment and see if you have any questions.

Yeah. So the question is: the CSV files that we saw, are they all stored in one blob, or is there any partitioning on them? There is partitioning; we did partition them by date, yes.

The question is: how much time did it take you to convert all 100 terabytes of CSV data into the ORC file format? If I remember correctly, it took me a 16-node cluster and three days to do that conversion.

Have you compared this with, like, Databricks and Spark SQL?

Yeah, that's a great question. The question is: if you say that you run very fast but you do not compare yourself with anybody else, then how do I know? We have some benchmarks that we have done, and I have them at the back of my slides, so we'll talk about that once we get there.

The question is: we load our data into HBase for faster queries; instead of HBase, can we use Interactive Query for querying that data? Well, HBase is a really great product if you have point scans, or you are scanning a smaller amount of data and you need those results to come back in the millisecond range. With Interactive Query, the expectation is from a few seconds to five minutes, but on very large data sets, large tables, and whatnot. So, depending on what your expectations are, you can definitely think about that.

Was there a question in the back?

Yes. All right, so the question is: if every second day I receive multiple of these CSV files, do I need to aggregate them into one file to get this? No, you don't have to, but understand the Hadoop world and its problem with small files. If you have lots of small CSV files, you're going to run into performance issues. So to the question "can I query?", of course yes. But will I run into performance issues with lots of small files? The answer is yes, too.

Okay. So the question is: can this automatically combine those files? The answer is no. You'll probably have to run some pre-aggregation process that combines them.
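The pre-aggregation step mentioned in that answer is not described further in the talk. As one possible shape for it, the sketch below greedily groups small CSV files into batches of roughly a target size and merges each batch into a single file with one header. It runs on local temporary files purely for illustration; the function names, the target size, and the sample data are all assumptions, and a real job would read from and write to cloud storage.

```python
import csv
import tempfile
from pathlib import Path


def plan_batches(files, target_bytes):
    """Greedily group (path, size) pairs into batches of about target_bytes."""
    batches, current, current_size = [], [], 0
    for path, size in files:
        if current and current_size + size > target_bytes:
            batches.append(current)
            current, current_size = [], 0
        current.append(path)
        current_size += size
    if current:
        batches.append(current)
    return batches


def merge_csv(paths, out_path):
    """Concatenate CSV files that share a header, writing the header once."""
    with open(out_path, "w", newline="") as out:
        writer = csv.writer(out)
        for index, path in enumerate(paths):
            with open(path, newline="") as src:
                reader = csv.reader(src)
                header = next(reader, None)
                if index == 0 and header is not None:
                    writer.writerow(header)
                writer.writerows(reader)


# Demo with five tiny files standing in for many small daily drops.
workdir = Path(tempfile.mkdtemp())
small_files = []
for n in range(5):
    part = workdir / f"part-{n}.csv"
    part.write_text(f"region,forecast\ncity{n},{n}\n")
    small_files.append((part, part.stat().st_size))

batches = plan_batches(small_files, target_bytes=60)
merged = []
for i, batch in enumerate(batches):
    out_file = workdir / f"merged-{i}.csv"
    merge_csv(batch, out_file)
    merged.append(out_file)

print(len(small_files), "small files ->", len(merged), "merged files")
```

In practice the target size would be far larger than in this toy run, and the merged files would replace the small ones in the table's storage location.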
combines them, but Interactive Query itself doesn't have native, out-of-box capabilities that will combine files.

All right. So I think we want to get to this world where you are ingesting and serving to your users, right? That's the whole point. So what is HDInsight Interactive Query? It is built on Hive LLAP, Low Latency Analytical Processing. Before, the name was Low Latency Analytical Processing; we figured out that it's very hard to sell with that name, so we changed the name to Interactive Query, and a lot of people understand that.

You can query directly from Azure Blob store or Azure Data Lake Store. It works with text, JSON, CSV, TSV, ORC, Parquet, and Avro file formats. Super fast performance with text data, which I showed you.

Then there is the scalable query concurrency architecture. Sometimes when we think about these systems, we think in terms of one user or five users trying to hit them. But if you think about big enterprises, where there may be hundreds of users, hundreds

of BI analysts, trying to hit these systems at the same time, how do you think about concurrency, and how do these engines work from a concurrency perspective? We have found in our testing that Interactive Query works really well at higher levels of concurrency. We actually published a benchmark around that as well, and I'll show you.

And then, from a security perspective, you can secure it with Apache Ranger and Active Directory, and we'll talk about that a little bit more.

So how does it work from an architecture perspective? When I issued that query, where I wrote that TPC-DS query, the query went to a component called Hive Server Interactive. Hive Server Interactive has two functions. One of them is query planning, optimization, all of that. The second function is security trimming: this is the place where it will check whether you have permission on a certain table, columns, and rows. All of that work happens there.

These LLAP daemons are nothing but cluster nodes, and they talk with the underlying storage and the metadata store for data and metadata operations.

Now, one thing that is very interesting here is that these nodes have RAM, dynamic RAM. The demo that I was showing you used the D14 v2 VM from Azure, which has 112 gigabytes of RAM, for example. But then we also utilize the local SSD that comes with the cluster. For example, with a D14 v2 VM I get about 800 gigabytes of SSD that we don't charge for. So even with a 4-node cluster I have quite a bit of local cache pool, if you think about it: I have over 400 gigabytes of RAM and close to 3.2 terabytes of local SSD to work with.

So if we look inside an LLAP daemon, there
are multiple executors that can execute the query fragments and either receive the data or do shuffle, things of that nature. So it's a multi-threaded architecture. If you compare this with the Hive architecture, that is all serial, single-threaded, but here you have multiple threads trying to get data. If you look inside the executor, it has this notion of a record reader, and it plans and tries to read the data. First it will try to see if the data is in the cache and the metadata is in the metadata cache; if it doesn't find them, it's going to go and hit the underlying blob storage for that data.

Now, one of the important aspects of this piece is this idea of an intelligent cache. As I said, the caching tiers are your DRAM, your SSD, and then the last one is where your actual data is, which is Azure Blob storage. This caching mechanism is intelligent and it does automatic tiering. So, for example, let's

say you receive a new CSV file in the blob store and a user issues a query. LLAP is intelligent enough to do a lightweight filesystem check to see if there is a new date modified on that container or on that file. If there is, it can load that data into memory again. So you are always working with fresh data, and you are not in the business of maintaining the cache; the cache engine does that for you, which is very remarkable if you think about it.

Now, how does the cache get invalidated, or how do you evict from the cache? By default the policy is LRFU (least recently/frequently used). But the caching policy is pluggable, so if you want to extend it further and have your own policy, you definitely can do that.

All right, so the question becomes: how fast is it? For this test we ran 45 TPC-DS queries derived from the TPC-DS benchmark. The reason we ran 45 is that, of the engines we looked at, which were Spark, Presto, and Interactive Query (which is LLAP), these were the 45 queries that all of them could run without any modification. That was our criterion; we didn't want to modify any of these queries. In this case it was a one-terabyte data set, and we used a 32 terabyte, sorry, 32-node cluster, and the data was sitting in Azure Blob store.

So if we look at the results in terms of the total time for running these queries: the first one is LLAP, which is Interactive Query, with text, which is 1,478 seconds. If you compare that with Spark, for example, that was 1,503 seconds. Again, this wasn't Databricks Spark; this is the Spark that's running within HDInsight.

Those are seconds, yes. 45 TPC-DS queries and a one-terabyte data set.
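The caching behavior described a moment ago (DRAM first, then local SSD, then blob storage, with a lightweight modified-date check before serving cached data) can be sketched roughly as follows. This is a toy model for illustration only, not LLAP's implementation: `BlobStore`, `TieredCache`, the slot counts, and the simple oldest-first eviction are all assumptions made up for the example, and the real eviction policy is pluggable as noted in the talk.

```python
from dataclasses import dataclass, field


@dataclass
class BlobStore:
    """Hypothetical stand-in for remote storage: path -> (modified_stamp, data)."""
    files: dict = field(default_factory=dict)

    def put(self, path, data, stamp):
        self.files[path] = (stamp, data)

    def modified(self, path):
        # Cheap metadata lookup, analogous to the "date modified" check.
        return self.files[path][0]

    def read(self, path):
        # Expensive full read from remote storage.
        return self.files[path][1]


class TieredCache:
    """Toy two-tier cache (DRAM, then SSD) in front of a BlobStore."""

    def __init__(self, store, dram_slots, ssd_slots):
        self.store = store
        self.dram = {}  # path -> (stamp, data); dict order tracks age
        self.ssd = {}
        self.dram_slots = dram_slots
        self.ssd_slots = ssd_slots
        self.remote_reads = 0

    def get(self, path):
        stamp = self.store.modified(path)
        for tier in (self.dram, self.ssd):
            entry = tier.get(path)
            if entry is not None and entry[0] == stamp:
                if tier is self.ssd:
                    # Promote an SSD hit back into DRAM.
                    del self.ssd[path]
                    self._admit(path, entry)
                return entry[1]
        # Miss or stale entry: drop old copies and reload from the store.
        self.dram.pop(path, None)
        self.ssd.pop(path, None)
        data = self.store.read(path)
        self.remote_reads += 1
        self._admit(path, (stamp, data))
        return data

    def _admit(self, path, entry):
        self.dram[path] = entry
        if len(self.dram) > self.dram_slots:
            # Demote the oldest DRAM entry to SSD (assumed policy, for brevity).
            oldest = next(iter(self.dram))
            self.ssd[oldest] = self.dram.pop(oldest)
            if len(self.ssd) > self.ssd_slots:
                self.ssd.pop(next(iter(self.ssd)))
```

The point of the sketch is that the caller never invalidates anything by hand: a changed modified stamp is enough to force a reload, which matches the "you are not in the business of maintaining the cache" claim.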
So of course, if you think about LLAP with cached data, it was 1,061 seconds; that was the best. But that was okay, we kind of knew that might be the case. The most important aspect to us was, hey,

how fast it is even with text. It was the second best; that was 1,478, which was the second best in this benchmark.

And then, just for Interactive Query, we ran a 100-terabyte TPC-DS derived benchmark. As you see there, we were able to run all 99 queries unmodified. 41 percent of the queries finished within 30 seconds and 71 percent of them came back within two minutes. In this case the cluster was a 100-node cluster. How much money did I spend to run this benchmark? In this case it was $800 to run the 100-terabyte benchmark.

All right. So the speed is great, and a lot of our customers who are using it are very thrilled about it. But how about the concurrency? Because again, these are not single-user systems; you're going to have multiple people in the organization hitting these systems at the same time. How do we think about concurrency?

In this test we ran all 99 TPC-DS queries in different permutations and combinations. Our goal was to complete the workload as fast as possible, meaning that we wanted to be able to run the 99 queries as fast as possible. In test one we just picked one query at a time: we ran a query, let it finish, and then started the second. In the second test we ran two queries at a time, and then we kept increasing the concurrency all the way up to test seven, where we ran 64 queries at a time.

If you look at the results, they're very interesting. When we ran one query at a time, in a serial fashion, it took us the longest amount of time to finish our workload: 1,640 seconds. Then it progressively kept coming down, all the way up to where our concurrency
was 32. The reason it was giving us better performance with higher concurrency is that the previous query would bring data into the cache and then a new query would utilize that cache, so the caching actually helps speed things up quite a bit. At concurrency level 64 we started seeing a little bit of degradation. This is because there is a setting in the cluster where you set the max concurrency of the cluster; that was set to 32, so after 32 our queries were being queued.

We do have customers who are trying this at the level of hundreds, from a concurrency perspective. Now, if you think about it: what if I have a thousand people, can they hit this concurrently? The answer is probably no; these systems are not designed for that kind of use case. In that case people would use an OLAP-based or cube-based technology, maybe Analysis Services in the case of Microsoft, or Apache Druid in the case of open source, to serve those thousands of users with aggregates. But this was within Interactive Query.

So which one should we pick? There are three great technologies, right: Interactive Query, Spark SQL, Presto. All of them worked really well. They could be a little slower or faster depending upon who did the tests or what version was picked. But the thing that we really like from an Interactive Query perspective is this idea around intelligent

cache eviction, so you're not responsible for maintaining the cache; the system is maintaining that cache. The second aspect was query concurrency, where you can run lots of different queries against the same database.

Now, all of these benchmarks are public. We already have a blog post that talks about them. We also published a kit so that you can generate the data and run these queries. It's very simple; people can set this up in under ten minutes. Of course it will take time to generate the data, but at least you can set up the test kit in a minute. It's on GitHub, github.com/hdinsight, and you're going to see the test kit there.

All right. So it's great that we could do all of this good stuff, but how about security? Because again, our customers are enterprise customers, and they really want to make sure that there is no unauthorized access to the data and that their systems are not compromised.

The way we think about security in HDInsight: first, at the perimeter level, you can put these clusters inside a virtual network with very strict policies in terms of who can access it, who can even come within that perimeter. In terms of data storage, your data lives in, let's say, Azure Storage or Azure Data Lake Store. They provide encryption at rest, with Microsoft-managed keys as well as keys that you may want to bring to encrypt the data. Then we use HTTPS for encryption in transit, so your data is very secure from that perspective.

Now, for authentication there are two aspects to it. One is around where your identities live. We have taken a dependency on Azure Active Directory; you
know, that's where all of your identities live. But the challenge with that model is that, if you think about all of these technologies, they understand Kerberos, whereas

if you think about Azure Active Directory, it understands OAuth. So there is a component of Azure Active Directory that we leverage, called Azure Active Directory Domain Services. It's basically an Active Directory server. You sync your users from Azure Active Directory to this Active Directory server, which then provides you with Kerberos tickets, for example. So that's for identity management.

Now, in terms of authorization, we use Apache Ranger. How many of you have heard of Apache Ranger? Some of you, okay. Apache Ranger is a policy management product. You can define very fine-grained policies in terms of who has access to, let's say, which tables, which rows, and which columns, and I'll show you a demo.

Architecturally, how does this setup work? You may have your users on-premises in your directories. You will sync those users with Azure Active Directory, then further sync them with Azure Active Directory Domain Services for Kerberos, and that's what the HDInsight cluster talks to in terms of getting those users. Then for policies it goes to Apache Ranger, and then it talks to the underlying data store, in this case Azure Data Lake Store.

So let me show you something there. I have a cluster that I already created. This is a different cluster, with our Enterprise Security Package, meaning I have identity management as well as policy management with Apache Ranger, as you can see right there.

Before I go to Apache Ranger, let's first go here and look at some of my tables. All right, I have a few tables here, and let's say I want to see some data. So let's start with a very simple query. In this case I'm logged in as the HDI user, as you can see there, and I'm executing that query. It will bring the results back, and as
you can see, I can see all of the data here right now.

Now I want to go to Apache Ranger, which is the policy administration portal where I can specify who has access to what. You can go into Hive policies, for example, and define who has access to what data; those are access policies. Then you can have a row-level filter, which is very interesting. If you think about security in a traditional sense, you would create views on a table and then assign some kind of security there. It's very neat here; you don't have to do any of that. You can have your filtering and policies

applied for multiple personas on the same table, so you don't have to create views for that.

Now, the one that I want to show you here is a policy called a masking policy. The policy that I've created is on that Hive sample table, for this user called test user one. And if you look at the option, I want this device make column to be redacted. The device make is kind of an easy thing, but think if this was credit card information, for example, and you don't want a credit card to show to a test user. So I already had this set up here.

Now I'm that user one, and I'm coming from a tool called DBeaver. DBeaver is an open source tool; you can query data from anything that supports ODBC or JDBC, for example. So I connected my table here, and now it's test user one trying to read data there, and my device make should be redacted. As you can see here, my device make is redacted, because I'm just a test user and I shouldn't be able to see that data.

Now let's say I am another user, and for this I will go to my desktop that is sitting in Redmond. Let's say in this case I am a Power BI user; I'm using Power BI to connect to the Interactive Query cluster that I just showed you. It's very interactive, if you think about it; if I click on some of these things, it's very, very interactive. And none of this data is cached in Power BI. We are using DirectQuery connectivity, so the moment I click anything, the queries are actually going back to the cluster, and that's how this is being served. But you can see it's very, very fast.

But the point that I was showing you, from the security demo perspective: the device make is still redacted. So you can have multiple different personas coming to the system from whatever wonderful,
beautiful tools they like, but the security is applied at the Hive Server Interactive level, so it doesn't really matter where they come from; they can totally come here. So I just want to pause there for a few seconds and see if there are any questions.

Okay, so the question is: the query that you showed in Power BI, is that a very simple table that you're showing? The answer is yes, this is a single table and just a select statement. I'll actually show you the query at the back end, in terms of how it looks. But we have scenarios where people have built these dashboards using very complex queries, and then the question becomes how fast they are, right?

Yeah. So how interactive is this really? Again, I cheated a little bit: I clicked on these things before, meaning the data is cached in memory and thereby it's very, very fast. Let

me pick something, let's say in this case maybe Tennessee. Okay, and you can see there's a little bit of loading going on. Because this data is coming from a blob store, it's taking a little bit of time. So let's give it maybe a second more. Okay, let's see. So the first user doing this query is going to see the behavior that you see right now, but anybody else who hits Tennessee after that is going to get very fast results, because the data is in memory, the data is in cache.

Yeah. So the question is: how do I warm the cache so that everybody has a good experience? Two answers there. So far, our customers look at it like this: if they understand the user patterns and what kind of queries users would run, they just run those queries in the morning, and thereby the cache is all warmed up. And with the community, we are working on ways to detect commonly used queries, for example, and then auto-cache them. But yeah, that's the kind of behavior you would see. Now it's still not back from this one for some reason, but yeah.

So now, I've been clicking a lot of things. If I go to the cluster and look at the Tez view, where all of these queries are recorded, every click that I was making is recorded here, and you can see the kind of query that was generated from Power BI.

Yeah, so the question is: I want to restrict users, let's say by state, right? What you would do in that case, in Apache Ranger, let's go here, and in Apache Ranger let's look at this policy: there is something called a row-level filter, okay. So
you can create these row-level filters. Let's say that row-level filter is on state, and let's say the state is California, and you want to make sure that a certain user only sees data from California, for example. That's how you could do it. If your question is that you want to restrict it based upon where people are connecting from, I don't know of a good solution off the top of my head right now.

All right. And then again, HDInsight is a five-year-old service. We've been in production for five years, and we work very hard to remain compliant with various compliance needs, from Azure and from various government bodies and compliance authorities.

So how about monitoring and operations? As I said, our best performance is with the out-of-box cluster, so we don't expect our customers to do any kind of optimization there. We monitor and repair your clusters and services, and then we provide an SLA on the entire solution.

Also, from a monitoring perspective, think about all of these endpoints; these are actual open source endpoints. For example, if you think about HBase in this case, we're going to be checking the health of that component, and if anything bad happens, or any issue happens, we just go and take corrective action and fix it before our customers even know about it. This is just one of the internal diagrams that I'm showing you. This is a service called Siebel. What you're seeing in this diagram is that about 250 machines or 250 clusters got impacted by a bug that was introduced in one of the newer versions of Python. We detected it, and our automation was able to fix it, and that issue went away for our customers. This is the
same thing, but in a per-cluster view. We have a threshold: let's say something is down for 15 minutes, then apply the mitigation. In that case the threshold was hit and the mitigation was applied; a severity 4 ticket was created and it was marked as resolved. Then we have it for our auditing purposes, in terms of what happened. Sometimes this service won't be able to resolve some of these issues, so it will just mark the ticket unresolved and give it to the on-call engineer, for example. This is just an example of how our internal

dashboard looks on a per-cluster basis.

Now, that's about our monitoring: we're going to monitor the infrastructure, we're going to monitor the open source bits. But how are you going to monitor your applications? How are you going to monitor how much cache you're using, and whether you need to add more nodes, for example, if you're running low on anything? There are first-party tools, such as Grafana, for example, and Ambari, which I showed you. But we have also done an integration with Azure Log Analytics, so all of the metrics and all of the data from your cluster can go to Azure Log Analytics. On top of that, you can do very sophisticated analytics, not only with your metrics but also with logs from your cluster and from your application, in terms of what's happening there. And we have built some very specialized dashboards for you to look into what's important for a specific workload and what to do there.

As I said, Log Analytics is a very sophisticated tool for trying to find patterns and trying to find issues, and you can write very sophisticated queries to find issues inside your cluster. We use a version of it ourselves; internally at Microsoft we call it Kusto, and all of our DevOps engineers and our engineers use this tool to troubleshoot customer issues. It's great that we have now made it available to our customers, so that they can do the same kind of DevOps that we do on our side.

Now, how do we use it? We basically push a Fluentd agent to all of the nodes. Have you heard of Fluentd before? It's basically an open source agent that can collect different kinds of signals. That's what we install on every single node, and then we also give
it what data to collect. For example, in one of these configuration files, for HBase or for LLAP, the configuration file would say: collect the HiveServer2 logs, for example, or the YARN logs, as well as JMX metrics. So this configuration file has the definition of what data needs to be collected. Once it collects the data, the data gets ingested into the Log Analytics service that we were showing you. At the time of ingestion the data gets indexed, so it's very easy to search and create dashboards and whatnot, and that's what you just saw in those previous slides. So this is how this whole architecture works.

You can extend this architecture. Let's say you have applications running and there's a log that you want to collect from the cluster: you would just push a configuration file with specific information about where your log is, how you want to collect it, and how you want to decorate it, and then ship it out to the Log Analytics service.

Okay, so if we build something like this, how much is this thing going to cost? This is a typical cost profile: 100 terabytes of data, and you are running a six-node cluster, serving six concurrent sessions all the time, about a million queries in a month. Including the storage cost and the HDInsight cost, that would be something like $9,345. This is just to give you a way to think about it from a cost perspective.

So with that, we have about four minutes, and I'm happy to answer any other questions that you might have.

So the question is: is Apache Ranger GA? No, it's in preview, and we plan on making it generally available in the next few months.

So the question is: I saw that row filter;
would it support RBAC at the storage level or at the table level? Yes. So you can specify a row-level filter and then assign it to a group, and then every user that belongs to that group would see or experience that.

Yeah, an Azure Active Directory group, that is correct. Your groups and users are synced directly from Azure Active Directory.

Sorry, there is a follow-up question, and I will get back to you.

So the question is: users and groups are fine, but how about applications? In this world there is no concept like a service principal, for example. Even if you think about Hive, we authenticate them as Hive users, so that's what the identities are. As the space changes, as the open source changes, we'll change with it, but that's not something that is there right now.
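To make the group-based row-level filter and the column masking from the demo a little more concrete, here is a minimal sketch of the idea. It is not the Apache Ranger API or its policy format; `apply_policies`, the group names, and the `"xxxx"` redaction marker are hypothetical, and the `state` and `devicemake` column names are only illustrative stand-ins for the columns shown in the demo.

```python
def apply_policies(rows, user_groups, row_filters, masks):
    """Return the rows a user may see, with masked columns redacted.

    rows        -- list of dicts, one per table row
    user_groups -- set of group names the user belongs to
    row_filters -- list of (group, predicate) pairs; a predicate keeps a row
    masks       -- list of (group, column) pairs; the column is redacted
    """
    visible = []
    for row in rows:
        # A filter only constrains users who belong to the group it names.
        allowed = all(
            predicate(row)
            for group, predicate in row_filters
            if group in user_groups
        )
        if not allowed:
            continue
        out = dict(row)
        for group, column in masks:
            if group in user_groups:
                out[column] = "xxxx"  # hypothetical redaction marker
        visible.append(out)
    return visible


# Illustrative data and policies (all names are made up for this sketch).
rows = [
    {"state": "California", "devicemake": "Apple"},
    {"state": "Tennessee", "devicemake": "Samsung"},
]
row_filters = [("california_analysts", lambda r: r["state"] == "California")]
masks = [("test_users", "devicemake")]

restricted = apply_policies(rows, {"california_analysts", "test_users"},
                            row_filters, masks)
unrestricted = apply_policies(rows, {"admins"}, row_filters, masks)
```

Because the policies are evaluated per user at query time, the same table serves several personas without a separate view for each, which is the contrast with the traditional view-based approach drawn in the talk.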

Okay. So the question is: in the demo you showed DirectQuery mode; is there a plan to support import mode as well? Import mode is already there. Yeah, import mode is already there; it's just that everybody has it, which is why I showed you a different one.

Using the Ranger setup for row-level security, does that user need access to the underlying storage?

Yeah, that's a great question. Again, there are two types of operations in the cluster. One is: I just want to be able to read the data; I want to look at the table and see the data. That's one use case. The other use case is that I want to be able to write data, so maybe I am creating a table and I need access to the path where I can create those tables. For the first use case, the security can be maintained in Ranger itself and you're not actually touching any storage location; you're just looking at the Hive table, for example. There we send a service principal to the underlying storage, and that works well. Now, if you come for an operation where you will be creating a table and we look into a storage location, in that case we will pass your user identity to the underlying storage, and if you have authorization at that layer, then you will be able to do that work; otherwise permission will be denied. So you need to have access in both places: in Ranger as well as at the underlying Data Lake Store level.

All right, there are no more questions. Thank you very much.
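The read-versus-write rule from that last answer can be written down as a small decision function. This is only a sketch of the logic as described in the talk, not HDInsight or Ranger code; `can_run`, the grant set, and the ACL dictionary are hypothetical names invented for the example.

```python
def can_run(operation, user, ranger_grants, storage_acl, path=None):
    """Decide whether `user` may perform `operation` ("read" or "write").

    ranger_grants -- set of (user, operation) pairs allowed by policy
    storage_acl   -- dict mapping a storage path to the set of users
                     allowed to write there
    """
    # Every operation must first be allowed by the policy layer.
    if (user, operation) not in ranger_grants:
        return False
    if operation == "read":
        # Reads reach storage under the cluster's own service principal,
        # so the end user needs no storage-level permission.
        return True
    # Writes pass the user's identity down to storage, so the user must
    # also be authorized on the target path.
    return user in storage_acl.get(path, set())
```

Under these assumptions, a user with only a policy grant can query tables but cannot create one in a location where the storage layer does not list them, which is the "access in both places" requirement.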

2018-05-11 22:55